Predicting Drug Fate: A Comprehensive Guide to Modern QSAR and QSPR Models for Pharmacokinetic Properties

Abstract

This article provides a comprehensive overview of Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) models as critical tools for predicting the pharmacokinetic (ADME) profiles of drug candidates. Aimed at researchers and drug development professionals, it covers foundational concepts, modern methodological approaches including machine learning, best practices for model troubleshooting and optimization, and rigorous validation and comparative analysis frameworks. The content synthesizes current best practices to guide the effective development and application of these predictive models in accelerating and de-risking the drug discovery pipeline.

QSAR/QSPR for ADME: Understanding the Core Concepts and Critical Pharmacokinetic Properties

Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) are computational modeling methodologies that establish quantitative correlations between the chemical structure of compounds (described by molecular descriptors) and their biological activity (QSAR) or physicochemical properties (QSPR). Within pharmacokinetics (PK) research, these models are pivotal for predicting Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, enabling the prioritization of lead compounds and reducing late-stage attrition in drug development.

Key Molecular Descriptors for Pharmacokinetic Prediction

Molecular descriptors are numerical representations of a molecule's structural and chemical features. The table below categorizes essential descriptors used in QSAR/QSPR models for PK properties.

Table 1: Key Molecular Descriptor Categories for PK-QSAR/QSPR Models

| Descriptor Category | Specific Examples | Relevance to Pharmacokinetic Properties |

|---|---|---|

| Hydrophobicity | LogP (octanol-water partition coefficient), LogD | Oral absorption, membrane permeation, plasma protein binding, volume of distribution. |

| Electronic | pKa, partial atomic charges, HOMO/LUMO energies | Solubility, ionization state at physiological pH, metabolic reactivity. |

| Steric/Topological | Molecular weight (MW), Topological Polar Surface Area (TPSA), molar refractivity, rotatable bond count | Membrane penetration (e.g., blood-brain barrier), oral bioavailability (Rule of Five), metabolic stability. |

| Geometric | Principal moments of inertia, molecular volume | Shape complementarity to enzymes or transporters involved in metabolism and disposition. |

| Quantum Chemical | Electrostatic potential maps, Fukui indices | Reactivity with metabolic enzymes (e.g., Cytochrome P450). |

| 3-Dimensional | Comparative Molecular Field Analysis (CoMFA) fields | Specific binding interactions for transporters or metabolizing enzymes. |

Application Notes & Protocols

Protocol: Developing a QSAR Model for CYP450 3A4-Mediated Metabolism

Objective: To build a robust QSAR model for predicting the rate of metabolism by the CYP3A4 isozyme.

Materials & Reagent Solutions:

Table 2: Research Reagent Solutions & Essential Materials

| Item | Function/Explanation |

|---|---|

| Chemical Dataset | Curated set of 150+ compounds with experimentally measured intrinsic clearance (CLint) for human CYP3A4. |

| Cheminformatics Software (e.g., RDKit, PaDEL-Descriptor) | To calculate 2D and 3D molecular descriptors from SMILES strings or molecular structures. |

| Data Analysis Platform (e.g., Python/R with scikit-learn, KNIME) | For data preprocessing, model training, validation, and statistical analysis. |

| Molecular Modeling Suite (e.g., OpenBabel, MOE) | For initial structure optimization, energy minimization, and conformational analysis. |

| Y-Scrambling Script | A custom script to perform Y-scrambling as a robustness test against chance correlation. |

Procedure:

- Data Curation & Preparation:

- Source experimental CLint values (µL/min/pmol P450) from peer-reviewed literature or proprietary assays. Log-transform the CLint values so that the response variable, log(CLint), is closer to normally distributed.

- Ensure chemical structure standardization (tautomer standardization, salt stripping, neutralization).

- Descriptor Calculation & Preprocessing:

- Calculate a wide range of molecular descriptors (e.g., ~1500 from PaDEL). Generate stable, low-energy 3D conformers for 3D descriptor calculation.

- Remove descriptors with zero or near-zero variance. Address missing values by imputation or removal.

- Apply correlation analysis to remove highly inter-correlated descriptors (e.g., |r| > 0.95).

- Dataset Division:

- Split the data into training set (≈70-80%) and an external test set (≈20-30%) using a rational method (e.g., Kennard-Stone) to ensure chemical space representativeness.

- Model Building & Variable Selection:

- On the training set, apply a variable selection algorithm (e.g., Genetic Algorithm, Stepwise Regression) coupled with a modeling method like Partial Least Squares (PLS) or Random Forest (RF).

- Use internal cross-validation (e.g., 5-fold CV) to prevent overfitting and determine the optimal number of descriptors/PLS components.

- Model Validation & Interpretation:

- Internal Validation: Report Q² (cross-validated R²) and RMSECV from the training set.

- External Validation: Apply the final model to the untouched test set. Report R²ext, RMSEext, and the Concordance Correlation Coefficient (CCC).

- OECD Principle Compliance: Verify that the model has a defined endpoint, an unambiguous algorithm, a defined domain of applicability, appropriate measures of goodness-of-fit, robustness, and predictivity, and, where possible, a mechanistic interpretation. Perform Y-scrambling to confirm that the model is not the result of a chance correlation.

- Application: Use the validated model to predict log(CLint) for novel virtual compounds in a lead optimization pipeline.

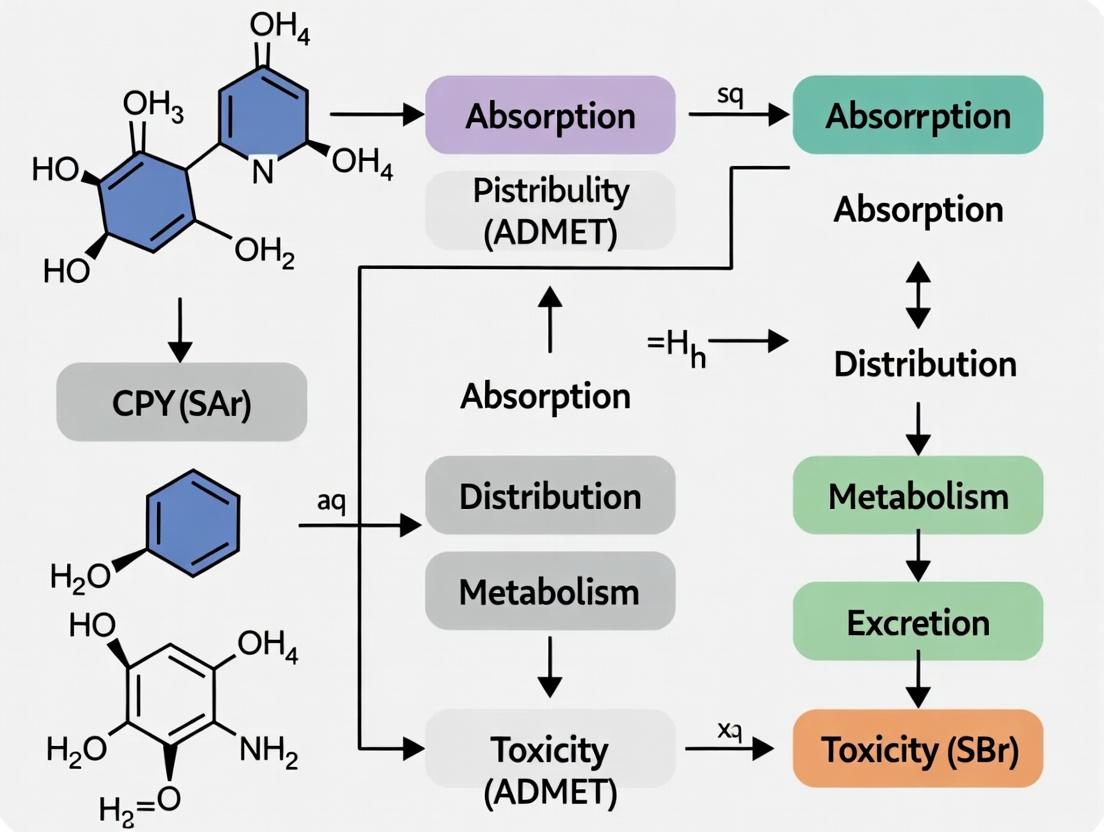

QSAR Modeling Workflow for PK Properties
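The external validation metrics named in the protocol can be computed in a few lines. The following is a minimal sketch, not the implementation of any particular package: the function name `external_metrics` is illustrative, and R²ext is computed here against the test-set mean, which is one of several conventions in the QSAR literature and is an assumption of this example.

```python
import math

def external_metrics(y_obs, y_pred):
    """Return R2ext, RMSEext, and CCC for an external test set.

    Assumption: R2ext uses the mean of the observed test-set values
    as its reference; other conventions use the training-set mean.
    """
    n = len(y_obs)
    if n != len(y_pred) or n < 2:
        raise ValueError("need two equally sized lists with at least 2 values")
    mean_obs = sum(y_obs) / n
    mean_pred = sum(y_pred) / n
    ss_res = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    ss_tot = sum((o - mean_obs) ** 2 for o in y_obs)
    # Population (1/n) variances and covariance, as used in Lin's CCC
    var_obs = ss_tot / n
    var_pred = sum((p - mean_pred) ** 2 for p in y_pred) / n
    cov = sum((o - mean_obs) * (p - mean_pred)
              for o, p in zip(y_obs, y_pred)) / n
    return {
        "r2_ext": 1.0 - ss_res / ss_tot,
        "rmse_ext": math.sqrt(ss_res / n),
        "ccc": 2.0 * cov / (var_obs + var_pred + (mean_obs - mean_pred) ** 2),
    }

# Hypothetical observed vs. predicted log(CLint) values for four test compounds
metrics = external_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
print(metrics)
```

Unlike R², the CCC penalizes systematic bias between observed and predicted values, which is why the two are usually reported together.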

Protocol: High-Throughput In Silico Prediction of Human Oral Bioavailability (F%)

Objective: To implement a consensus QSPR model for rapid prioritization of compounds based on predicted human oral bioavailability.

Procedure:

- Define the Endpoint: Collect a high-quality dataset of human F% values from literature (e.g., Hou et al., J. Med. Chem., 2009).

- Multi-Descriptor Approach: Calculate descriptors from four key categories: 1D (MW, logP), 2D (TPSA, rotatable bonds), 3D (shadow indices), and quantum-chemical (H-bonding capacity).

- Consensus Modeling:

- Build individual models using different algorithms (e.g., Multiple Linear Regression (MLR), Support Vector Machine (SVM), Artificial Neural Network (ANN)) on the same training set.

- Determine the consensus prediction as the arithmetic mean of predictions from all individual models that pass an applicability domain check.

- Applicability Domain (AD) Definition:

- Implement the Leverage approach. For each new compound, calculate the hat value (hi). Define a threshold as h* = 3(p+1)/n, where p is the number of model descriptors and n is the number of training compounds. A compound with hi > h* is outside the AD.

- Deployment: Integrate the validated consensus model and AD check into a user-friendly web portal or pipeline script for medicinal chemists.

Consensus Modeling & Applicability Domain
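The consensus and applicability domain steps of this protocol can be sketched as follows. This is an illustrative outline only: the function names are hypothetical, and the hat value of the query compound is assumed to have been computed beforehand for each individual model from its own training descriptor matrix.

```python
def leverage_threshold(n_descriptors, n_training):
    """Warning leverage h* = 3(p + 1) / n."""
    return 3.0 * (n_descriptors + 1) / n_training

def consensus_prediction(model_outputs):
    """Average the predictions of all models whose AD check passes.

    model_outputs: list of (prediction, hat_value, h_star) tuples,
    one per individual model (e.g., MLR, SVM, ANN).
    Returns (consensus value or None, number of models used).
    """
    in_domain = [pred for pred, h, h_star in model_outputs if h <= h_star]
    if not in_domain:
        return None, 0  # compound is outside the AD of every model
    return sum(in_domain) / len(in_domain), len(in_domain)

# Hypothetical example: three models, each with 5 descriptors and 120 training compounds
h_star = leverage_threshold(n_descriptors=5, n_training=120)
outputs = [
    (62.0, 0.08, h_star),  # inside the AD
    (58.0, 0.21, h_star),  # outside the AD, excluded from the mean
    (66.0, 0.10, h_star),  # inside the AD
]
f_percent, n_used = consensus_prediction(outputs)
print(h_star, f_percent, n_used)
```

Returning the number of contributing models alongside the prediction lets a downstream script flag compounds for which only one model, or none, was inside its domain.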

Data Presentation: Model Performance Metrics

Table 3: Representative Performance of Published QSAR/QSPR Models for Key PK Properties

| PK Property | Model Type | Dataset Size (n) | Key Descriptors | Validation Performance | Reference (Year) |

|---|---|---|---|---|---|

| Human Oral Absorption (%) | PLS | 169 | TPSA, logD7.4, Rotatable Bonds | R²ext = 0.80 | Mol. Pharmaceutics (2021) |

| Blood-Brain Barrier Penetration (LogBB) | Gradient Boosting | 780 | logP, pKa, H-Bond Donors, P-glycoprotein substrate probability | Q² = 0.73, R²ext = 0.71 | J. Chem. Inf. Model. (2022) |

| Renal Clearance (CLr) | Random Forest | 302 | Molecular Charge, logP, PSA, MW | CCCext = 0.82 | Eur. J. Med. Chem. (2023) |

| Plasma Protein Binding (%) | ANN | 1213 | logP, logD, Acid/Base pKa, Ion Class | RMSEext = 12.5% | J. Cheminform. (2020) |

| CYP3A4 Inhibition (pIC50) | SVM | 5010 | ECFP6 Fingerprints, logP, TPSA | BA = 0.89 (External) | Bioinformatics (2023) |

BA = Balanced Accuracy; R²ext/CCCext = External Test Set Metrics.

Integration into Drug Discovery Workflow

QSAR/QSPR models are integrated early and applied iteratively throughout modern drug discovery.

Integration of QSAR/QSPR in Drug Discovery

The quantitative prediction of Absorption, Distribution, Metabolism, and Excretion (ADME) properties is a cornerstone of modern drug discovery. Within the framework of Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) modeling, ADME parameters serve as critical endpoints. Accurate in silico models can significantly reduce late-stage attrition by prioritizing compounds with favorable pharmacokinetic profiles. This application note details experimental protocols and key data for generating high-quality input data for such models.

Absorption

Absorption describes the passage of a drug from its site of administration into systemic circulation. Key assays focus on permeability and solubility.

Key Research Reagent Solutions

| Reagent/Material | Function in Absorption Studies |

|---|---|

| Caco-2 Cell Line | Human colon adenocarcinoma cells; form polarized monolayers for predicting intestinal permeability. |

| PAMPA Lipid System | Artificial membrane for high-throughput passive permeability screening. |

| FaSSIF/FeSSIF Media | Biorelevant media simulating fasted & fed state intestinal fluids for solubility measurement. |

| MDCK-MDR1 Cells | Madin-Darby Canine Kidney cells transfected with human MDR1 gene (P-gp) to assess efflux. |

Protocol 1.1: Caco-2 Permeability Assay

Objective: To determine the apparent permeability (Papp) of a test compound in the apical-to-basolateral (A-B) and basolateral-to-apical (B-A) directions.

- Cell Culture: Seed Caco-2 cells at high density (~100,000 cells/cm²) on collagen-coated Transwell inserts (0.4 μm pore). Culture for 21-23 days, changing medium every 2-3 days, until transepithelial electrical resistance (TEER) > 300 Ω·cm².

- Assay Buffer: Prepare Hanks' Balanced Salt Solution (HBSS) buffered with 10 mM HEPES, pH 7.4.

- Dosing Solution: Prepare test compound at 10 μM in assay buffer (from DMSO stock, ensure final DMSO <0.5%).

- Experiment:

- Aspirate media and wash monolayers twice with pre-warmed HBSS.

- Add dosing solution to the donor compartment (A or B). Add fresh buffer to the receiver compartment.

- Incubate at 37°C, 5% CO₂ with mild agitation.

- Sample 100 μL from the receiver side at t=30, 60, 90, and 120 min, replacing with fresh buffer.

- Analysis: Quantify compound concentration in samples via LC-MS/MS. Calculate Papp (cm/s):

$$P_{app} = \frac{dQ/dt}{A \cdot C_0}$$

where dQ/dt is the transport rate, A is the membrane area, and C₀ is the initial donor concentration.

- Data for QSAR: Calculate Efflux Ratio = Papp(B-A) / Papp(A-B). An efflux ratio >2 suggests active efflux.
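The Papp and efflux ratio calculations can be illustrated with a short script. This is a sketch under stated assumptions: the function names are illustrative, dQ/dt is estimated as the least-squares slope of cumulative receiver amount against time, and the cumulative amounts passed in are assumed to be already corrected for the 100 μL removed at each sampling point.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx

def papp_cm_per_s(times_min, cumulative_pmol, area_cm2, c0_uM):
    """Apparent permeability Papp = (dQ/dt) / (A * C0) in cm/s."""
    dq_dt = ols_slope(times_min, cumulative_pmol) / 60.0  # pmol/min -> pmol/s
    c0_pmol_per_cm3 = c0_uM * 1000.0                       # 1 uM = 1000 pmol/cm^3
    return dq_dt / (area_cm2 * c0_pmol_per_cm3)

def efflux_ratio(papp_b_to_a, papp_a_to_b):
    """Efflux ratio = Papp(B-A) / Papp(A-B)."""
    return papp_b_to_a / papp_a_to_b

# Hypothetical A-B run: 10 uM dose, 1.12 cm^2 insert, sampling at 30-120 min
papp_ab = papp_cm_per_s([30, 60, 90, 120], [15.0, 30.0, 45.0, 60.0], 1.12, 10.0)
print(f"Papp(A-B) = {papp_ab:.2e} cm/s")

# Efflux ratio from a pair of Papp values (x10^-6 cm/s); a value above 2 suggests active efflux
print(round(efflux_ratio(35.6, 4.2), 1))
```

Fitting a slope across all four time points, rather than using a single end point, makes it easier to spot non-linear transport caused by loss of sink conditions or monolayer damage.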

Table 1: Representative Caco-2 Permeability Data for Model Building

| Compound Class | Log P | Papp (A-B) (x10⁻⁶ cm/s) | Papp (B-A) (x10⁻⁶ cm/s) | Efflux Ratio | Human Fa (%) |

|---|---|---|---|---|---|

| High Permeability (Metoprolol) | 1.8 | 25.3 ± 3.1 | 28.1 ± 4.0 | 1.1 | ~95% |

| Low Permeability (Atenolol) | 0.2 | 1.5 ± 0.4 | 1.7 ± 0.3 | 1.1 | ~50% |

| Efflux Substrate (Loperamide) | 4.9 | 4.2 ± 1.1 | 35.6 ± 5.7 | 8.5 | <10% |

Diagram Title: Caco-2 Assay Transport Pathways

Distribution

Distribution involves the reversible transfer of a drug between blood and tissues. Volume of distribution (Vd) and plasma protein binding (PPB) are key parameters.

Protocol 2.1: Equilibrium Dialysis for Plasma Protein Binding

Objective: To determine the fraction of drug unbound in plasma (fu) and, from it, the percentage bound to plasma proteins.

- Equipment: 96-well equilibrium dialysis device with semi-permeable membranes (MWCO 12-14 kDa).

- Preparation: Pre-soak membranes in deionized water for 15 min, then in dialysis buffer for 5 min.

- Loading: Add 150 μL of plasma (human, rat, etc.) spiked with test compound (typically 5 μM) to the donor chamber. Add 150 μL of phosphate buffer (pH 7.4) to the receiver chamber.

- Incubation: Seal the plate and incubate at 37°C with gentle orbital shaking for 4-6 hours to reach equilibrium.

- Sampling: Post-incubation, aliquot 50 μL from both donor and receiver chambers. For donor (plasma) samples, add an equal volume of blank buffer. For receiver (buffer) samples, add an equal volume of blank plasma.

- Analysis: Analyze all samples by LC-MS/MS to determine compound concentrations [D] and [R].

- Calculation: Fraction unbound (fu) = [R] / [D]. % Bound = (1 - fu) x 100.
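As a worked example of the calculation step, the sketch below converts a pair of measured concentrations into fu and % bound. The function names and the example concentrations are hypothetical; both samples are assumed to have been matrix-matched as described in the sampling step, so the two concentrations are directly comparable.

```python
def fraction_unbound(conc_receiver, conc_donor):
    """fu = [R] / [D], with both concentrations in the same units."""
    if conc_donor <= 0:
        raise ValueError("donor concentration must be positive")
    return conc_receiver / conc_donor

def percent_bound(fu):
    """% Bound = (1 - fu) x 100."""
    return (1.0 - fu) * 100.0

# Hypothetical highly bound compound: 0.05 uM in buffer vs. 5.0 uM in plasma
fu = fraction_unbound(0.05, 5.0)
print(f"fu = {fu:.3f}, bound = {percent_bound(fu):.1f}%")
```

For QSAR work it is often fu (or a log-transformed form of it) rather than % bound that is modeled, because small absolute differences at high binding correspond to large relative differences in free drug.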

Table 2: Distribution Property Data for Model Compounds

| Compound | Log D₇.₄ | PPB (% Bound) | Reported Vd (L/kg) | Primary Tissue Binder |

|---|---|---|---|---|

| Warfarin | 1.4 | 99.0 ± 0.2 | 0.14 | Albumin |

| Propranolol | 1.2 | 87.0 ± 2.5 | 4.0 | α1-Acid Glycoprotein |

| Digoxin | 1.8 | 23.0 ± 5.0 | 6.0 | Tissue (Na⁺/K⁺ ATPase) |

| Chloroquine | 4.9 | 55.0 ± 8.0 | 200-800 | Lysosomes |

Metabolism

Metabolism involves enzymatic modification of the drug, primarily by hepatic cytochromes P450 (CYPs), leading to inactivation or activation.

Key Research Reagent Solutions

| Reagent/Material | Function in Metabolism Studies |

|---|---|

| Human Liver Microsomes (HLM) | Subcellular fraction containing membrane-bound CYPs and UGTs for intrinsic clearance assays. |

| Recombinant CYP Isozymes | Individual CYP enzymes (CYP3A4, 2D6, etc.) for reaction phenotyping. |

| CYP-specific Inhibitors | e.g., Ketoconazole (CYP3A4), Quinidine (CYP2D6) for inhibition studies. |

| NADPH Regenerating System | Supplies essential cofactor (NADPH) for oxidative reactions. |

Protocol 3.1: Microsomal Intrinsic Clearance (CLint)

Objective: To determine the in vitro half-life (t₁/₂) and intrinsic clearance of a compound.

- Incubation Cocktail: Prepare 0.5 mg/mL HLM in 100 mM phosphate buffer (pH 7.4) with 3.3 mM MgCl₂. Pre-incubate at 37°C for 5 min.

- Reaction Initiation: Add test compound (final concentration 1 μM) and immediately add the NADPH regenerating system (final 1 mM NADP⁺, 3.3 mM G6P, 0.4 U/mL G6PDH). Start timer.

- Time Points: Withdraw aliquots (e.g., 50 μL) at t=0, 5, 10, 20, 30, and 60 min. Immediately quench each aliquot with an equal volume of ice-cold acetonitrile containing internal standard.

- Processing: Vortex, centrifuge (≥3000g, 10 min), and analyze supernatant by LC-MS/MS for parent compound remaining.

- Data Analysis: Plot ln(% parent remaining) vs. time and fit a straight line. Slope = -k (first-order elimination rate constant).

- In vitro t₁/₂ = 0.693 / k

- CLint (μL/min/mg protein) = (0.693 / t₁/₂) * (Incubation Volume / Protein Mass)
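The data analysis step can be sketched in code as a log-linear regression followed by the two formulas above. This is a minimal illustration with a hypothetical function name and synthetic data; it assumes first-order depletion over the whole time course and does not exclude late time points that fall below the quantification limit.

```python
import math

def microsomal_clint(times_min, pct_remaining, incubation_volume_uL, protein_mg):
    """Return (k in 1/min, t1/2 in min, CLint in uL/min/mg protein)."""
    ln_pct = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_ln = sum(ln_pct) / n
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    sxy = sum((t - mean_t) * (y - mean_ln) for t, y in zip(times_min, ln_pct))
    k = -sxy / sxx                      # slope of ln(% remaining) vs. time is -k
    t_half = math.log(2) / k            # in vitro half-life
    clint = k * incubation_volume_uL / protein_mg
    return k, t_half, clint

# Synthetic time course for a compound with a 30 min half-life
times = [0, 5, 10, 20, 30, 60]
k_true = math.log(2) / 30.0
remaining = [100.0 * math.exp(-k_true * t) for t in times]

# 1000 uL incubation at 0.5 mg/mL microsomal protein -> 0.5 mg protein
k, t_half, clint = microsomal_clint(times, remaining, 1000.0, 0.5)
print(f"k = {k:.4f} 1/min, t1/2 = {t_half:.1f} min, CLint = {clint:.1f} uL/min/mg")
```

At 0.5 mg/mL the volume-to-protein ratio is 2000 μL/mg, so CLint is simply 2000 × k in this setup.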

Diagram Title: Primary Hepatic Metabolism Pathways

Excretion

Excretion is the removal of the drug and its metabolites from the body, primarily via urine (renal) or bile (hepatic).

Protocol 4.1: Biliary Excretion Using Sandwich-Cultured Hepatocytes

Objective: To assess the potential for biliary excretion and identify transporter involvement.

- Hepatocyte Culture: Seed primary hepatocytes (human/rat) on collagen-coated plates. Overlay with Matrigel on day 2 to form canalicular networks.

- Experimental Groups: On day 5, set up two conditions: Standard Buffer (tight junctions intact, canaliculi sealed) and Ca²⁺-free Buffer (tight junctions disrupted, canalicular contents released).

- Dosing & Uptake: Incubate hepatocytes with test compound (2-5 μM) in standard buffer for 10 min at 37°C.

- Accumulation Phase: Replace with fresh compound-containing buffer for 30 min. For the Ca²⁺-free group, wash and incubate with Ca²⁺-free buffer 10 min prior to this step.

- Wash & Lysis: Wash cells rapidly with ice-cold buffer. Lyse cells with 70% methanol/water.

- Analysis: Measure intracellular drug accumulation by LC-MS/MS.

- Calculation: Biliary Excretion Index (BEI%) = (1 - [Accumulation in Ca²⁺-free / Accumulation in Standard]) x 100. The difference represents compound trapped in intact canaliculi.
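A short worked example of the BEI calculation, using hypothetical accumulation values normalized to protein content (the function name is illustrative):

```python
def biliary_excretion_index(accum_standard, accum_ca_free):
    """BEI% = (1 - accumulation in Ca2+-free / accumulation in standard) x 100.

    accum_standard: cells + bile canaliculi (standard buffer)
    accum_ca_free:  cells only (Ca2+-free buffer)
    """
    if accum_standard <= 0:
        raise ValueError("accumulation in standard buffer must be positive")
    return (1.0 - accum_ca_free / accum_standard) * 100.0

# Hypothetical accumulation values in pmol/mg protein
bei = biliary_excretion_index(accum_standard=120.0, accum_ca_free=75.0)
print(f"BEI = {bei:.1f}%")
```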

Table 3: Key Pharmacokinetic Parameters from Standard Studies

| PK Parameter | Typical In Vivo Study (Rat) | Common In Vitro Assay | Key for QSAR Modeling |

|---|---|---|---|

| Bioavailability (F%) | IV & PO dosing, plasma AUC | Caco-2 Papp, HLM CLint | Predicts oral absorption & first-pass effect. |

| Volume of Distribution (Vd) | IV bolus, plasma PK | PPB, Log P/D, in vitro tissue binding | Predicts tissue penetration. |

| Clearance (CL) | IV infusion, plasma PK | HLM/ Hepatocyte CLint | Predicts elimination rate & half-life. |

| Half-life (t₁/₂) | Derived from Vd & CL | Composite from CLint & PPB | Predicts dosing frequency. |

Diagram Title: ADME Data in QSAR Modeling Workflow

Within the broader thesis on Quantitative Structure-Activity/Property Relationship (QSAR/QSPR) models for pharmacokinetic (PK) research, the selection of molecular descriptors is foundational. These numerical representations of molecular structure are critical for predicting ADME properties (Absorption, Distribution, Metabolism, Excretion). This document provides detailed application notes and protocols for calculating and utilizing four primary descriptor classes—Topological, Electronic, Geometric, and 3D—in PK prediction workflows.

Topological Descriptors

Topological descriptors are derived from the 2D molecular graph, encoding information about atom connectivity and branching. They are computationally inexpensive and invariant to molecular conformation.

Key Parameters & PK Relevance:

- Wiener Index: Correlates with molecular volume and boiling point, used in predicting membrane permeability.

- Randic Connectivity Indices (χ): Related to molecular surface area and van der Waals interactions; predictive for lipophilicity and blood-brain barrier penetration.

- Kier & Hall Molecular Connectivity Indices: Describe shape and branching; useful for modeling volume of distribution and clearance.

- Balaban Index (J): A distance-based index sensitive to cyclicity; correlates with stability and metabolic reactivity.
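To make the idea of a graph-derived descriptor concrete, the sketch below computes the Wiener index, defined as the sum of shortest-path distances (in bonds) over all pairs of heavy atoms. The adjacency-list input format and function name are choices made for this illustration; cheminformatics toolkits provide their own implementations.

```python
from collections import deque

def wiener_index(adjacency):
    """Sum of shortest-path bond counts over all unordered atom pairs.

    adjacency: dict mapping each heavy-atom index to a list of bonded atoms
    (hydrogen-suppressed molecular graph).
    """
    total = 0
    for source in adjacency:
        # Breadth-first search gives shortest path lengths from `source`
        dist = {source: 0}
        queue = deque([source])
        while queue:
            atom = queue.popleft()
            for neighbor in adjacency[atom]:
                if neighbor not in dist:
                    dist[neighbor] = dist[atom] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
    return total // 2  # each pair was counted from both ends

# Carbon skeletons of two C4H10 isomers
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1], 1: [0, 2, 3], 2: [1], 3: [1]}

print(wiener_index(n_butane))   # 10
print(wiener_index(isobutane))  # 9
```

The branched isomer has the lower index, which illustrates how the descriptor encodes compactness without any 3D information.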

Electronic Descriptors

Electronic descriptors quantify the distribution of electrons, crucial for modeling interactions like hydrogen bonding, polarization, and reactivity with metabolizing enzymes.

Key Parameters & PK Relevance:

- Partial Atomic Charges (e.g., Gasteiger-Marsili): Determine electrostatic interaction potentials, influencing protein binding and passive diffusion.

- Highest Occupied & Lowest Unoccupied Molecular Orbital Energies (EHOMO, ELUMO): Indicate electron-donating/accepting potential; predictive for metabolic oxidation and reduction pathways.

- Molecular Dipole Moment: Influences solubility and interaction with aqueous environments and transporter proteins.

- Fukui Indices: Describe site-specific reactivity for electrophilic/nucleophilic attack, directly applicable to predicting sites of metabolism (SoM).

Geometric Descriptors

Geometric descriptors are calculated from the 3D molecular structure but are invariant to rotation and translation. They describe size and shape.

Key Parameters & PK Relevance:

- Principal Moments of Inertia (Ia, Ib, Ic): Describe the overall molecular shape (rod-, disc-, or sphere-like), influencing packing in crystal lattices (solubility) and fit into enzyme active sites.

- Molecular Surface Areas (SAS, SASpolar, SAShydrophobic): Solvent-accessible surface areas correlate strongly with hydrophobicity (log P), hydration energy, and permeability.

- Gravitational Index: Related to the distribution of mass in space; used in models for protein-ligand binding affinity.

3D Descriptors (Conformation-Dependent)

3D descriptors capture spatial information, including pharmacophoric features and interaction fields, and are highly sensitive to molecular conformation.

Key Parameters & PK Relevance:

- Comparative Molecular Field Analysis (CoMFA) Fields: Steric and electrostatic interaction energies calculated at grid points; extensively used in 3D-QSAR for receptor affinity and metabolic stability.

- WHIM Descriptors (Weighted Holistic Invariant Molecular): Capture size, shape, symmetry, and atom distribution; applicable to bioavailability modeling.

- Radial Distribution Function (RDF) Codes: Encode distance-dependent atom density; useful for modeling nonspecific interactions in distribution processes.

- Pharmacophore Feature Points: Distances and angles between hydrogen bond donors/acceptors, hydrophobic centers, and aromatic rings; critical for predicting substrate specificity for transporters and CYP450 isoforms.

Table 1: Summary of Key Molecular Descriptors for Primary PK Properties

| PK Property | Topological Descriptors | Electronic Descriptors | Geometric Descriptors | 3D Descriptors |

|---|---|---|---|---|

| Lipophilicity (log P) | Randic Connectivity Indices, Molecular ID Number | Partial Charge, Dipole Moment | Molecular Surface Area (SAS) | CoMFA Steric/Elec. Fields |

| Aqueous Solubility | Balaban Index, Kappa Shape Indices | HOMO/LUMO, Sum of Absolute Charge | Solvent-Accessible Surface Area | RDF Codes, WHIM Descriptors |

| BBB Permeability | Wiener Index, Polar Surface Area (2D) | Hydrogen Bond Donor/Acceptor Count | Principal Moments of Inertia | Pharmacophore Distance Features |

| Metabolic Stability | Molecular Complexity Indices | Fukui Indices, HOMO Energy | -- | GRID/MIF Interaction Energies |

| Plasma Protein Binding | Number of Rotatable Bonds | Partial Charge on Aromatic Atoms | Hydrophobic Surface Area (SAS_h) | 3D Molecular Shape Similarity |

| Volume of Distribution | Kier Hall Indices | -- | Molecular Volume | -- |

Experimental Protocols

Protocol 3.1: Calculation of a Comprehensive Descriptor Set Using Open-Source Tools

Objective: To generate topological, electronic, geometric, and 3D descriptors for a library of compounds in SDF format using RDKit and PaDEL-Descriptor.

Materials: See "The Scientist's Toolkit" (Section 5).

Procedure:

- Input Preparation: Prepare a single SDF file containing the 2D or 3D structures of all compounds. Ensure structures are protonated correctly for the physiological pH of interest (typically pH 7.4).

- Descriptor Calculation with RDKit (Python Script):

- Descriptor Calculation with PaDEL-Descriptor (Command Line):

- Post-Processing: Merge descriptor sets. Remove columns with zero variance or >20% missing values. Impute missing values using median or k-nearest neighbors. Standardize or normalize the data.
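The post-processing step can be sketched with the standard library alone. The example below is illustrative: the column-dictionary layout and function name are assumptions, missing values are represented as `None`, median imputation is used, and standardization is a z-score. In a modeling workflow the medians, means, and standard deviations would be estimated on the training set only and then applied to other sets.

```python
import statistics

def postprocess(columns, max_missing=0.20):
    """Filter, impute, and standardize descriptor columns.

    columns: dict mapping descriptor name -> list of floats or None.
    Drops columns with > max_missing missing values or zero variance,
    imputes remaining gaps with the column median, then z-scores.
    """
    cleaned = {}
    for name, values in columns.items():
        present = [v for v in values if v is not None]
        missing_fraction = (len(values) - len(present)) / len(values)
        if not present or missing_fraction > max_missing:
            continue
        median = statistics.median(present)
        filled = [median if v is None else v for v in values]
        sd = statistics.pstdev(filled)
        if sd == 0:
            continue  # zero-variance descriptor carries no information
        mean = statistics.mean(filled)
        cleaned[name] = [(v - mean) / sd for v in filled]
    return cleaned

# Hypothetical merged descriptor table for five compounds
example_columns = {
    "MW": [180.2, 151.2, 206.3, None, 194.2],      # 20% missing: kept, imputed
    "n_boron": [0.0, 0.0, 0.0, 0.0, 0.0],          # zero variance: dropped
    "dipole_3d": [None, None, 0.3, 0.1, None],     # 60% missing: dropped
}
print(list(postprocess(example_columns)))
```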

Protocol 3.2: Workflow for PK Prediction Using a Multi-Descriptor QSAR Model

Objective: To build a predictive model for Human Intestinal Absorption (HIA) using a curated set of molecular descriptors.

Procedure:

- Data Curation: Obtain a dataset of compounds with reliable experimental %HIA values. Split data into training (70%), validation (15%), and test (15%) sets.

- Descriptor Calculation & Selection: Generate descriptors as per Protocol 3.1. Perform feature selection using the training set only (to avoid data leakage). Use methods like:

- Variance Threshold: Remove low-variance descriptors.

- Correlation Analysis: Remove one from any pair with Pearson correlation >0.95.

- Feature Importance: Use Random Forest or LASSO regression to select the top 30-50 most informative descriptors.

- Model Building: Train multiple algorithms (e.g., Random Forest, Support Vector Machine, Gradient Boosting) on the training set using the selected descriptors.

- Model Validation: Tune hyperparameters using the validation set via grid search. Apply the final model to the held-out test set. Report key metrics: R², Q² (cross-validated R²), RMSE, and MAE.

- Applicability Domain (AD) Definition: Use methods like leverage (Williams plot) or distance-based measures (e.g., Euclidean distance in descriptor space) to define the model's AD. Flag predictions for compounds outside the AD as less reliable.
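The correlation-analysis step of the feature selection in this protocol can be sketched as a greedy filter. This is one simple way to do it, not a prescribed method: the function names are illustrative, descriptors are visited in input order, and the first member of any highly correlated pair is the one retained. It should be run on training-set values only, with the resulting column list then applied to the validation and test sets.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 for constant columns."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / math.sqrt(sxx * syy)

def drop_correlated(columns, threshold=0.95):
    """Return descriptor names to keep, removing one of each pair with |r| > threshold."""
    kept = []
    for name in columns:
        if all(abs(pearson(columns[name], columns[k])) <= threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical training-set descriptors for five compounds
training_columns = {
    "logP": [1.0, 2.0, 3.0, 4.0, 5.0],
    "logD": [1.1, 2.0, 3.1, 3.9, 5.0],    # nearly collinear with logP
    "TPSA": [90.0, 20.0, 75.0, 40.0, 60.0],
}
print(drop_correlated(training_columns))
```

Because the result depends on column order, a common refinement is to sort descriptors beforehand by relevance to the endpoint or by interpretability, so that the preferred member of each pair is the one kept.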

Visualization of Workflows and Relationships

QSAR Model Development Workflow for PK Prediction

Mapping Descriptor Classes to ADME Properties

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Resources for Molecular Descriptor Calculation

| Item/Category | Specific Tool/Resource Example | Function in PK Descriptor Research |

|---|---|---|

| Cheminformatics Suites | RDKit (Open Source), OpenBabel | Core library for molecule manipulation, 2D descriptor calculation, and fingerprint generation. |

| Descriptor Calculators | PaDEL-Descriptor, Dragon (Commercial) | Generate thousands of topological, electronic, and 2D/3D descriptors from structure files. |

| Conformer Generators | OMEGA (OpenEye), CONFGEN (Schrödinger) | Generate biologically relevant, low-energy 3D conformers essential for 3D and geometric descriptors. |

| Quantum Chemistry | Gaussian, GAMESS, ORCA | Calculate high-accuracy electronic descriptors (HOMO/LUMO, Fukui indices, MEP). |

| Molecular Modeling | AutoDock Vina, Schrödinger Maestro | Perform docking and generate interaction fields for advanced 3D descriptor derivation. |

| Data & Benchmark Sets | ChEMBL, PK-DB, ADME SARfari | Public repositories for obtaining experimental PK data for model training and validation. |

| Programming Environment | Python (Jupyter, pandas, scikit-learn) | Environment for scripting descriptor pipelines, data analysis, and machine learning modeling. |

The predictive accuracy of Quantitative Structure-Activity/Property Relationship (QSAR/QSPR) models for pharmacokinetic properties (Absorption, Distribution, Metabolism, and Excretion - ADME) is fundamentally dependent on the quality, quantity, and relevance of the underlying experimental data. This document provides application notes and detailed protocols for sourcing and utilizing high-quality ADME data from key public repositories, framed within the thesis that robust data curation is the cornerstone of reliable predictive modeling in drug development.

Key Public ADME Data Repositories: A Comparative Analysis

The following table summarizes essential datasets and repositories, highlighting their scope, data types, and utility for QSAR/QSPR modeling.

Table 1: Core Public Repositories for Experimental ADME Data

| Repository Name | Primary Focus & Data Type | Key Metrics & Volume (Approx.) | Direct Utility for QSAR/QSPR |

|---|---|---|---|

| ChEMBL | Bioactivity, ADME, & physicochemical data from literature. | >2M compounds, >1.4M ADME datapoints (e.g., LogD, solubility, hepatic clearance). | High. Well-annotated, standardized data suitable for large-scale model training. |

| PubChem BioAssay | Bioactivity screening results, including some ADME-relevant assays. | >1M bioassays; subsets for P-gp inhibition, CYP450 inhibition. | Moderate. Requires careful curation to extract specific ADME endpoints. |

| DrugBank | Comprehensive drug data including ADME parameters for approved drugs. | ~14K drug entries; curated PK parameters (half-life, clearance, etc.). | High for benchmark datasets. Gold-standard data for approved molecules. |

| PK/DB (Perlstein Lab) | Curated pharmacokinetic data for small molecules in humans & animals. | ~1,300 compounds with human CL, Vd, F, t1/2. | Very High. Focused purely on in vivo PK parameters for modeling. |

| OpenADMET | Curated ADME properties from diverse sources with standardized formats. | ~500K compounds for 10+ properties (e.g., Caco-2, Pgp-inhibition). | High. Pre-filtered for ADME modeling, includes predictive challenges. |

Application Note: Constructing a Curated CYP3A4 Inhibition Dataset from ChEMBL

Objective: To build a high-confidence dataset for training a QSAR model of Cytochrome P450 3A4 inhibition.

Protocol:

- Data Retrieval: Access the ChEMBL database via its web interface or API.

- Assay Selection: Query for target `CHEMBL340` (CYP3A4). Filter for `ASSAY_TYPE='B'` (binding) and `RELATION='='` (exact measurement).

- Data Filtering:

- Retain only records with standard `IC50`, `Ki`, or `% Inhibition` values.

- Apply a confidence score filter: `CONFIDENCE_SCORE >= 8`.

- Remove duplicates by `CHEMBL_COMPOUND_ID`, keeping the geometric mean of multiple values.

- Convert all values to nM units and subsequently to pIC50 (-log10(IC50 in M)).

- Structural Curation: Download canonical SMILES for each compound. Standardize structures using a toolkit (e.g., RDKit): neutralize charges, remove salts, generate tautomer representatives.

- Final Dataset: The resulting table should contain the columns `Compound_ID`, `Standard_SMILES`, `pIC50_Mean`, and `Measurement_Count`.
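The unit conversion and duplicate handling in this protocol can be sketched as follows. The example assumes the records have already been retrieved and filtered to IC50 values in nM; the function name, record layout, and compound identifiers are hypothetical. Averaging pIC50 values is used here because the arithmetic mean of pIC50 is mathematically equivalent to taking the geometric mean of the IC50 values.

```python
import math
from collections import defaultdict

def aggregate_pic50(records):
    """Collapse replicate IC50 measurements into one pIC50 per compound.

    records: iterable of (compound_id, ic50_nM) tuples.
    Returns dict: compound_id -> (mean pIC50, measurement count).
    """
    by_compound = defaultdict(list)
    for compound_id, ic50_nm in records:
        if ic50_nm is None or ic50_nm <= 0:
            continue  # skip unusable values
        # pIC50 = -log10(IC50 in M) = 9 - log10(IC50 in nM)
        by_compound[compound_id].append(9.0 - math.log10(ic50_nm))
    return {
        cid: (sum(values) / len(values), len(values))
        for cid, values in by_compound.items()
    }

# Hypothetical filtered records (identifiers are placeholders, not real ChEMBL IDs)
records = [
    ("CMPD_A", 100.0),
    ("CMPD_A", 400.0),   # replicate: geometric mean IC50 is 200 nM
    ("CMPD_B", 1000.0),
]
for cid, (pic50_mean, count) in aggregate_pic50(records).items():
    print(cid, round(pic50_mean, 3), count)
```

Keeping the measurement count makes it possible to down-weight or inspect compounds whose value rests on a single assay.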

Diagram 1: Data Curation Workflow for QSAR

Experimental Protocols for Key ADME Assays

Sourced data must be understood in the context of the original experimental methods.

Protocol 4.1: Parallel Artificial Membrane Permeability Assay (PAMPA)

Purpose: High-throughput measurement of passive transcellular permeability.

Detailed Methodology:

- Plate Preparation: A 96-well microfilter plate is coated with 5 µL of a lipid solution (e.g., 2% lecithin in dodecane) to form the artificial membrane.

- Donor Solution: Add 150 µL of test compound solution (e.g., 100 µM in pH 7.4 buffer) to the donor plate.

- Acceptor Solution: Place the membrane plate on top of an acceptor plate containing 300 µL of pH 7.4 buffer (or a sink buffer).

- Incubation: Assemble the sandwich and incubate at 25°C for 4-16 hours without agitation.

- Analysis: Quantify compound concentration in both donor and acceptor wells using UV spectroscopy or LC-MS/MS.

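- Calculation: Effective permeability (Pe, cm/s) is calculated using:

$$P_e = -\frac{\ln\left(1 - C_A / C_{eq}\right)}{A \, t \, \left(1/V_D + 1/V_A\right)}, \qquad C_{eq} = \frac{C_D V_D + C_A V_A}{V_D + V_A}$$

where C_A and C_D are the acceptor and donor concentrations at the end of the incubation, V_D and V_A are the donor and acceptor volumes, A is the filter area, and t is the incubation time. For equal donor and acceptor volumes, the term 1 - C_A/C_eq reduces to 1 - 2C_A/(C_D + C_A).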
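A minimal sketch of the permeability calculation is given below. It assumes the commonly used two-compartment form, Pe = -ln(1 - C_A/C_eq) / (A · t · (1/V_D + 1/V_A)) with C_eq = (C_D·V_D + C_A·V_A)/(V_D + V_A), which accommodates the unequal donor and acceptor volumes used in this protocol. The function name, filter area, and concentrations in the example are hypothetical, and membrane retention is not corrected for.

```python
import math

def pampa_pe(c_acceptor, c_donor, v_donor_cm3, v_acceptor_cm3, area_cm2, time_s):
    """Effective permeability Pe in cm/s from end-point concentrations.

    Concentrations may be in any unit as long as both use the same one.
    """
    c_eq = ((c_donor * v_donor_cm3 + c_acceptor * v_acceptor_cm3)
            / (v_donor_cm3 + v_acceptor_cm3))
    ratio = c_acceptor / c_eq
    if not 0.0 <= ratio < 1.0:
        raise ValueError("acceptor concentration must be below equilibrium")
    return -math.log(1.0 - ratio) / (
        area_cm2 * (1.0 / v_donor_cm3 + 1.0 / v_acceptor_cm3) * time_s
    )

# Hypothetical 5 h incubation: 150 uL donor, 300 uL acceptor, 0.3 cm^2 filter
pe = pampa_pe(
    c_acceptor=8.0,        # uM at end of incubation
    c_donor=80.0,          # uM at end of incubation
    v_donor_cm3=0.15,
    v_acceptor_cm3=0.30,
    area_cm2=0.3,
    time_s=5 * 3600,
)
print(f"Pe = {pe:.2e} cm/s")
```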

Protocol 4.2: Human Liver Microsome (HLM) Stability Assay

Purpose: Determine metabolic stability (half-life, intrinsic clearance) of a compound.

Detailed Methodology:

- Incubation Mix: Prepare 195 µL of incubation mixture containing 0.5 mg/mL HLM protein in 100 mM potassium phosphate buffer (pH 7.4) with 2 mM MgCl2. Pre-incubate for 5 min at 37°C.

- Reaction Initiation: Start the reaction by adding 5 µL of NADPH regenerating system (final: 1 mM NADP+, 5 mM glucose-6-phosphate, 1 U/mL G6P dehydrogenase).

- Time Course Sampling: At times t = 0, 5, 10, 20, 30, 45 min, withdraw 25 µL aliquots and quench in 100 µL of cold acetonitrile with internal standard.

- Sample Processing: Centrifuge at 3000xg for 15 min to precipitate proteins. Analyze supernatant by LC-MS/MS.

- Data Analysis: Plot ln(% parent compound remaining) vs. time. The slope of the linear fit gives the first-order decay rate constant (slope = -k). Calculate the in vitro half-life and intrinsic clearance (CL_int):

$$t_{1/2} = \frac{\ln 2}{k}, \qquad CL_{int} = \frac{0.693}{t_{1/2}} \times \frac{\text{Incubation Volume}}{\text{Microsomal Protein}}$$

Diagram 2: HLM Assay Metabolic Pathway

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents for Featured ADME Assays

| Item/Category | Function & Application | Example Product/Specification |

|---|---|---|

| Human Liver Microsomes (HLM) | Source of cytochrome P450 and other drug-metabolizing enzymes for in vitro stability assays. | Pooled, mixed-gender, 20-donor pool; >150 pmol/mg total CYP450. |

| Caco-2 Cell Line | Human colon adenocarcinoma cells that differentiate into enterocyte-like monolayers for permeability studies. | ATCC HTB-37. Passage number 25-45 for optimal differentiation. |

| PAMPA Lipid Solution | Forms the artificial membrane in PAMPA assays to model passive transcellular permeability. | 2% (w/v) Phosphatidylcholine in Dodecane. |

| NADPH Regenerating System | Provides constant supply of NADPH cofactor for oxidative metabolism in microsomal assays. | System A: NADP+, Glucose-6-Phosphate, MgCl2, and G6P Dehydrogenase. |

| LC-MS/MS System | Gold-standard for quantification of parent compound and metabolites in complex biological matrices. | Triple quadrupole mass spectrometer coupled to UHPLC. |

The Evolution from Classical Linear Models to Modern AI-Driven Approaches

Within Quantitative Structure-Activity/Property Relationship (QSAR/QSPR) modeling for pharmacokinetic (PK) properties, the methodological shift from interpretable linear frameworks to complex, high-dimensional artificial intelligence (AI) models represents a paradigm shift. This evolution addresses the need to model complex, non-linear biological systems governing absorption, distribution, metabolism, excretion, and toxicity (ADMET), ultimately accelerating drug candidate optimization.

Chronological Methodological Evolution & Quantitative Performance

Table 1: Comparison of Modeling Approaches for PK-QSAR

| Era & Model Type | Typical Algorithm(s) | Key Advantages | Key Limitations | Reported Performance (e.g., CYP450 Inhibition Prediction) |

|---|---|---|---|---|

| Classical Linear (1990s-2000s) | Multiple Linear Regression (MLR), Partial Least Squares (PLS) | High interpretability, low computational cost, minimal overfitting risk. | Cannot capture non-linear relationships, limited to few descriptors, poor for complex endpoints. | Accuracy: ~65-75%; R²: 0.6-0.7 |

| Early Non-Linear & Machine Learning (2000s-2010s) | Support Vector Machines (SVM), Random Forest (RF), k-Nearest Neighbors (kNN) | Captures non-linearity, handles more descriptors, better predictive power. | "Black-box" nature emerges, risk of overfitting without careful validation. | Accuracy: ~78-85%; R²: 0.75-0.82 |

| Modern Deep Learning (2010s-Present) | Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), Transformers | Learns features directly from molecular structure (SMILES, graphs), models highly complex relationships. | High data/computational demand, extreme "black-box," requires large datasets. | Accuracy: ~88-92%; R²: 0.85-0.92 |

Experimental Protocols

Protocol 3.1: Building a Classical PLS Model for LogP Prediction

Objective: To predict octanol-water partition coefficient (LogP) using molecular descriptor-based PLS regression.

- Dataset Curation: Curate a set of 500-1000 drug-like molecules with experimentally measured LogP values from sources like ChEMBL. Apply a 70/30 training/test split.

- Descriptor Calculation: Using software like RDKit or PaDEL-Descriptor, calculate 1D and 2D molecular descriptors (e.g., molecular weight, topological polar surface area, counts of donors/acceptors). Standardize all descriptors.

- Feature Selection: Apply Variance Threshold (remove low-variance descriptors) and Pearson Correlation (remove highly correlated pairs, |r| > 0.95).

- Model Training: Using Scikit-learn, fit a PLS regression model on the training set. Determine optimal number of components via 10-fold cross-validation.

- Validation: Predict LogP for the hold-out test set. Report R², Root Mean Square Error (RMSE), and Mean Absolute Error (MAE).
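
The feature selection step of this protocol (variance threshold followed by a Pearson correlation filter at |r| > 0.95) can be sketched without any external library. The helper names and the small descriptor table below are hypothetical. Keeping the first descriptor of each correlated pair is an assumption, since the protocol does not say which member to drop.

```python
import math

def variance(col):
    """Population variance of one descriptor column."""
    m = sum(col) / len(col)
    return sum((v - m) ** 2 for v in col) / len(col)

def pearson(a, b):
    """Pearson correlation coefficient between two columns."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)

def filter_descriptors(columns, min_variance=1e-8, max_abs_r=0.95):
    """Drop near-constant columns, then greedily drop columns correlated with a kept one."""
    kept = [name for name, col in columns.items() if variance(col) > min_variance]
    selected = []
    for name in kept:
        if all(abs(pearson(columns[name], columns[other])) <= max_abs_r for other in selected):
            selected.append(name)
    return selected

# Illustrative descriptor table (made-up values, one list entry per molecule)
descriptors = {
    "MW": [180.2, 250.3, 310.4, 402.5, 150.1],
    "HeavyAtomCount": [13, 18, 22, 29, 11],   # nearly collinear with MW
    "TPSA": [63.6, 40.5, 95.2, 75.0, 20.2],
    "ConstantFlag": [1, 1, 1, 1, 1],          # zero variance
}

selected = filter_descriptors(descriptors)
print(selected)
```

To avoid information leaking from the test set, both filters should be computed on the training split only and then applied unchanged to the hold-out compounds.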

Protocol 3.2: Implementing a Graph Neural Network for Intrinsic Clearance Prediction

Objective: To predict human hepatic intrinsic clearance (CLint) directly from molecular graph representation.

- Data Preparation: Source in vitro CLint data (e.g., human liver microsomal stability). Represent each molecule as a graph: atoms as nodes (featurized with atomic number, degree, hybridization), bonds as edges (featurized with type).

- Model Architecture: Implement a Message Passing Neural Network (MPNN) using PyTorch Geometric. Architecture includes:

- Three message-passing layers to aggregate neighbor information.

- A global mean pooling layer to generate a molecule-level embedding.

- Two fully connected layers (ReLU activation, Dropout=0.2) leading to a single output node.

- Training Loop: Use Mean Squared Error loss and Adam optimizer. Train for 500 epochs with early stopping. Employ a separate validation set for hyperparameter tuning (learning rate, hidden layer dimension).

- Evaluation: Assess model on test set using RMSE, MAE, and calculate the fraction of predictions within 2-fold error.
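
The evaluation metrics named in the last step can be sketched as below. This is a minimal illustration with made-up CLint values. Computing RMSE and MAE on log10-transformed clearance is an assumption (a common convention for clearance data), and the function names are hypothetical.

```python
import math

def log_scale_errors(observed, predicted):
    """RMSE and MAE computed on log10-transformed values."""
    errors = [math.log10(p) - math.log10(o) for o, p in zip(observed, predicted)]
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    mae = sum(abs(e) for e in errors) / len(errors)
    return rmse, mae

def fraction_within_fold(observed, predicted, fold=2.0):
    """Fraction of predictions whose ratio to the observed value is within `fold`."""
    hits = sum(1 for o, p in zip(observed, predicted) if max(o / p, p / o) <= fold)
    return hits / len(observed)

# Illustrative CLint values in µL/min/mg (not real measurements)
observed_clint = [10.0, 50.0, 120.0, 8.0]
predicted_clint = [15.0, 20.0, 100.0, 9.0]

rmse, mae = log_scale_errors(observed_clint, predicted_clint)
within_2fold = fraction_within_fold(observed_clint, predicted_clint)
print(f"RMSE(log10) = {rmse:.3f}, MAE(log10) = {mae:.3f}, within 2-fold = {within_2fold:.2f}")
```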

Visualization of Key Concepts

QSAR Modeling Paradigm Shift

GNN Architecture for PK Prediction

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Resources for Modern AI-Driven PK-QSAR Research

| Category | Specific Tool/Resource | Function & Application in PK Modeling |

|---|---|---|

| Cheminformatics & Descriptors | RDKit, MOE, PaDEL-Descriptor | Generates classical molecular descriptors (topological, electronic) for traditional QSAR and initial feature sets. |

| High-Quality PK Data | ChEMBL, PK-DB, DrugBank | Provides curated, experimental ADMET/PK data for model training and benchmarking. |

| Deep Learning Frameworks | PyTorch (with PyTorch Geometric), TensorFlow (with DeepChem) | Enables building and training custom neural network architectures (GNNs, CNNs) for end-to-end learning. |

| Pre-trained Models & Benchmarks | ChemBERTa, MoleculeNet Benchmarks | ChemBERTa offers a transfer-learning starting point, reducing data requirements for specific PK endpoint prediction; MoleculeNet provides standardized benchmark datasets for comparing models. |

| Model Validation Platforms | KNIME, Orange Data Mining, Scikit-learn | Provides robust workflows for data splitting, cross-validation, and application of OECD QSAR validation principles. |

| Computational Infrastructure | Google Colab Pro, AWS SageMaker, NVIDIA GPUs | Delivers the necessary computational power (GPUs) for training large, data-hungry deep learning models. |

Building Predictive Models: Methodologies, Algorithms, and Practical Applications in Drug Discovery

Application Notes

This protocol provides a comprehensive, reproducible workflow for constructing Quantitative Structure-Activity/Property Relationship (QSAR/QSPR) models with a specific focus on pharmacokinetic (PK) properties, such as absorption, distribution, metabolism, excretion, and toxicity (ADMET). Within the broader thesis of accelerating drug discovery, robust QSAR/QSPR models serve as indispensable in silico tools for early-stage PK profiling, reducing costly late-stage attrition. The workflow emphasizes data integrity, computational transparency, and model validation to ensure reliable predictions for novel chemical entities.

Detailed Protocols

Phase I: Data Curation & Preparation

Objective: To assemble a high-quality, chemically diverse, and reliably labeled dataset of compounds with associated experimental PK property data.

Protocol:

- Source Identification: Query public databases (e.g., ChEMBL, PubChem, DrugBank) and proprietary sources using targeted searches (e.g., "human clearance," "Caco-2 permeability," "plasma protein binding").

- Data Aggregation: Extract compound structures (typically SMILES strings) and the corresponding numerical PK endpoint values (e.g., logD, half-life, IC50 for metabolic enzymes).

- Standardization: Apply chemical standardization rules using toolkits like RDKit or OpenBabel:

- Remove salts, solvents, and duplicates.

- Standardize tautomers and nitro groups.

- Generate canonical SMILES.

- Check for and correct invalid structures.

- Endpoint Curation: Harmonize units, identify and reconcile conflicting measurements for the same compound, and apply consistent log transformations where appropriate.

- Activity Thresholding: For classification models (e.g., high vs. low permeability), apply scientifically justified thresholds to continuous data.

- Chemical Space Analysis: Apply dimensionality reduction (e.g., PCA on simple descriptors) to visualize dataset coverage and identify potential clusters or outliers.

Key Data Table: Table 1: Example Curated Dataset for Human Oral Bioavailability (%F)

| Compound ID | SMILES | Experimental %F (Mean) | SD | Number of Measurements | Source Database |

|---|---|---|---|---|---|

| CID_12345 | CC(=O)Oc1... | 85.2 | 3.1 | 5 | ChEMBL 33 |

| CID_67890 | CN1CCC... | 45.7 | 5.6 | 3 | PubChem AID 1524 |

| CID_11223 | O=C(N... | 22.1 | 7.8 | 4 | In-house |
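
A table like the one above can be produced by grouping replicate measurements per canonical SMILES during endpoint curation. The sketch below is a hedged illustration. The function name, the `max_sd` cutoff of 15 percentage points, and all values are hypothetical, and setting aside conflicting compounds is only one of several possible reconciliation policies.

```python
from collections import defaultdict
from statistics import mean, stdev

def aggregate_measurements(records, max_sd=None):
    """Group (canonical_smiles, value) pairs and summarize replicates.

    Compounds whose replicate SD exceeds max_sd are left out of the summary
    so they can be reviewed manually (an assumed policy, not a standard).
    """
    grouped = defaultdict(list)
    for smiles, value in records:
        grouped[smiles].append(value)

    summary = {}
    for smiles, values in grouped.items():
        sd = stdev(values) if len(values) > 1 else 0.0
        if max_sd is not None and sd > max_sd:
            continue
        summary[smiles] = {"mean": mean(values), "sd": sd, "n": len(values)}
    return summary

# Illustrative %F records (simple placeholder structures, made-up values)
records = [
    ("CCO", 80.0), ("CCO", 84.0), ("CCO", 88.0),
    ("c1ccccc1", 20.0), ("c1ccccc1", 70.0),   # conflicting replicates
    ("CC(=O)O", 45.0),
]

summary = aggregate_measurements(records, max_sd=15.0)
for smiles, stats in summary.items():
    print(smiles, stats)
```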

Phase II: Molecular Descriptor Calculation & Feature Selection

Objective: To generate numerical representations of molecular structures and select the most informative, non-redundant features for model building.

Protocol:

- Descriptor Calculation: Using standardized SMILES as input, compute a comprehensive vector of descriptors for each molecule. Common categories include:

- 1D/2D Descriptors: Molecular weight, logP (e.g., XLogP), topological indices, electronegativity, etc.

- 3D Descriptors: Require geometry optimization (e.g., using MMFF94). Descriptors include molecular volume, 3D polar surface area, and principal moments of inertia. (TPSA, by contrast, is a 2D topological approximation computed without a conformer.)

- Fingerprints: Binary bit vectors indicating presence/absence of structural patterns (e.g., ECFP4, MACCS keys).

- Descriptor Processing: Handle missing values (impute or remove), and scale/normalize continuous descriptors (e.g., StandardScaler).

- Initial Feature Filtering: Remove near-constant or duplicate descriptors.

- Feature Selection: Apply statistical and machine learning methods to reduce dimensionality and avoid overfitting:

- Univariate: Correlation analysis with the target variable.

- Multivariate: Recursive Feature Elimination (RFE), LASSO regression, or feature importance from tree-based models.

Key Data Table: Table 2: Subset of Calculated Molecular Descriptors for Five Compounds

| Compound ID | MW | XLogP | TPSA | NumHDonors | NumHAcceptors | NumRotatableBonds |

|---|---|---|---|---|---|---|

| CID_12345 | 330.4 | 2.1 | 72.5 | 2 | 6 | 7 |

| CID_67890 | 278.3 | 3.8 | 45.2 | 1 | 4 | 5 |

| CID_11223 | 412.5 | 1.4 | 110.3 | 3 | 8 | 10 |

Phase III: Model Building, Validation & Application

Objective: To construct predictive, interpretable, and statistically robust QSAR/QSPR models using curated data and selected features.

Protocol:

- Data Splitting: Partition data into training (~70-80%), validation (~10-15%), and a fully held-out test set (~10-15%). Use stratified splitting for classification. Apply chemical similarity checks to ensure no overly similar molecules are in both training and test sets.

- Algorithm Selection & Training:

- Linear Methods: Partial Least Squares (PLS) for descriptor-based models.

- Non-linear Methods: Random Forest (RF), Gradient Boosting Machines (e.g., XGBoost), or Support Vector Machines (SVM).

- Deep Learning: Graph Neural Networks (GNNs) operating directly on molecular graphs.

- Training: Optimize hyperparameters (e.g., grid/random search) using the validation set and cross-validation on the training set.

- Model Validation:

- Internal Validation: Report Q² (cross-validated R²) and RMSEcv for regression; cross-validated accuracy, precision, recall, AUC-ROC for classification.

- External Validation: Evaluate final model on the held-out test set. Report R²test, RMSEtest, and applicable classification metrics. This is the gold standard for assessing predictive power.

- Applicability Domain (AD): Define the chemical space where the model's predictions are reliable (e.g., using leverage, distance-based methods).

- Interpretation & Reporting: Analyze feature importance (e.g., PLS coefficients, RF feature importance) to derive chemically meaningful insights. Adhere to OECD principles for QSAR validation.
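
Two steps in this phase rely on chemical similarity: checking that test compounds are not near-duplicates of training compounds, and flagging queries that fall outside the applicability domain. Both can be sketched with nearest-neighbor Tanimoto similarity on fingerprint on-bits. The cutoffs (0.9 and 0.3), the function names, and the toy bit sets are assumptions for illustration. This is a distance-based stand-in, not the leverage method.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two collections of fingerprint on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def nearest_training_similarity(query_bits, training_fps):
    """Highest Tanimoto similarity between the query and any training compound."""
    return max(tanimoto(query_bits, fp) for fp in training_fps)

def classify_query(query_bits, training_fps, leak_cutoff=0.9, domain_cutoff=0.3):
    """Label a compound relative to the training set (cutoffs are hypothetical)."""
    similarity = nearest_training_similarity(query_bits, training_fps)
    if similarity >= leak_cutoff:
        return "near-duplicate"   # too close to a training compound for a fair test
    if similarity < domain_cutoff:
        return "outside domain"   # prediction should be treated as unreliable
    return "inside domain"

# Toy fingerprints expressed as sets of on-bit indices
training_fps = [{1, 2, 3, 4, 5}, {10, 11, 12, 13}]

print(classify_query({1, 2, 3, 4, 5, 6}, training_fps))
print(classify_query({1, 2, 3, 4, 5}, training_fps))
print(classify_query({1, 20, 21, 22}, training_fps))
```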

Visualization of Workflow

Title: QSAR/QSPR Model Building Workflow for PK Properties

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Software for QSAR/QSPR Modeling

| Item Name | Category | Primary Function |

|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Core toolkit for chemical standardization, descriptor calculation, fingerprint generation, and molecular visualization. |

| KNIME Analytics Platform | Workflow Automation | Graphical platform for constructing, executing, and documenting the entire data-to-model workflow without extensive coding. |

| scikit-learn (Python) | Machine Learning Library | Provides a unified interface for feature selection, model training (PLS, RF, SVM), validation, and metrics calculation. |

| MOE (Molecular Operating Environment) | Commercial Software Suite | Integrated suite for molecular modeling, simulation, and comprehensive descriptor calculation (including 3D). |

| ChEMBL Database | Public Bioactivity Data | Curated source of experimental drug discovery data, including PK parameters for thousands of compounds. |

| OECD QSAR Toolbox | Regulatory Software | Facilitates grouping of chemicals, filling data gaps, and profiling for regulatory purposes, aligning with OECD principles. |

| Jupyter Notebook | Development Environment | Interactive environment for scripting, data analysis, visualization, and sharing reproducible research narratives. |

| Docker | Containerization Platform | Ensures computational reproducibility by packaging the entire modeling environment (OS, libraries, code) into a container. |

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) modeling for pharmacokinetic (PK) property research, machine learning (ML) algorithms have become indispensable. This document presents detailed application notes and experimental protocols for implementing four key ML algorithms—Random Forests, Support Vector Machines (SVM), Neural Networks, and Gradient Boosting—for predicting critical PK parameters such as bioavailability, clearance, volume of distribution, and half-life.

Research Reagent Solutions & Essential Materials

The following table details key software, libraries, and datasets essential for conducting ML-based PK prediction research.

| Item Name | Category | Function/Brief Explanation |

|---|---|---|

| ChEMBL Database | Dataset | A large-scale, open-access bioactivity database containing compound structures and curated ADMET/PK properties for model training and validation. |

| PubChem | Dataset | Public repository of chemical structures and biological activities, useful for feature generation and data augmentation. |

| RDKit | Software Library | Open-source cheminformatics toolkit for computing molecular descriptors (e.g., fingerprints, topological indices) and handling chemical data. |

| Dragon | Software | Commercial software for calculating a comprehensive set (>5000) of molecular descriptors for QSAR modeling. |

| scikit-learn | Software Library | Python ML library providing efficient implementations of Random Forests, SVM, and Gradient Boosting algorithms. |

| TensorFlow / PyTorch | Software Library | Deep learning frameworks for building and training complex neural network architectures. |

| ADMET Predictor | Software | Commercial platform specializing in predictive modeling of absorption, distribution, metabolism, excretion, and toxicity properties. |

| Python (v3.9+) | Programming Language | Primary language for scripting data preprocessing, model training, and evaluation pipelines. |

| Jupyter Notebook | Development Environment | Interactive environment for exploratory data analysis, model development, and result visualization. |

| MOE (Molecular Operating Environment) | Software | Integrated software for molecular modeling, simulation, and descriptor calculation in drug discovery. |

The table below summarizes comparative performance metrics of the four ML algorithms on benchmark PK prediction tasks, as reported in recent literature (2022-2024).

| Algorithm | Typical PK Endpoint | Reported R² (Test Set) | Reported RMSE | Key Advantages for PK Modeling | Common Limitations |

|---|---|---|---|---|---|

| Random Forest (RF) | Human Clearance, Bioavailability | 0.65 - 0.78 | 0.18 - 0.35 (log units) | Robust to outliers/noise; provides feature importance; minimal hyperparameter tuning. | Can overfit on noisy datasets; less interpretable than single trees. |

| Support Vector Machine (SVM) | Plasma Protein Binding, logD | 0.60 - 0.72 | 0.22 - 0.40 (log units) | Effective in high-dimensional spaces (many descriptors); strong theoretical foundation. | Performance sensitive to kernel choice and parameters; poor scalability to large datasets. |

| Neural Networks (NN) | Half-life, Volume of Distribution | 0.70 - 0.82 | 0.15 - 0.30 (log units) | Can model highly non-linear relationships; excels with large, complex datasets (e.g., molecular graphs). | Requires large data; prone to overfitting; "black-box" nature; extensive tuning needed. |

| Gradient Boosting (e.g., XGBoost) | Bioavailability, Metabolic Stability | 0.68 - 0.80 | 0.16 - 0.32 (log units) | High predictive accuracy; built-in regularization; handles mixed data types well. | More prone to overfitting than RF; sequential training is computationally intensive. |

Experimental Protocols

Protocol 3.1: Standard Workflow for ML-Based PK Prediction

This protocol outlines the generic workflow for developing a QSAR/QSPR model for a PK property using ML.

I. Data Curation & Preprocessing

- Source Data: Extract a compound dataset with associated experimental PK values (e.g., %F, CL, Vd) from a reliable database like ChEMBL.

- Curate Data: Apply stringent filters: remove duplicates, compounds with unreliable measurements, and extreme property outliers. Ensure a consistent experimental protocol for the endpoint.

- Split Data: Perform a stratified split (e.g., 70/15/15 or 80/10/10) into Training, Validation, and Hold-out Test Sets. Use clustering (e.g., on fingerprints) to ensure representative splits.

II. Molecular Featurization

- Compute Descriptors: Using RDKit or Dragon, calculate a wide range of molecular descriptors (1D, 2D, 3D) and fingerprints (e.g., Morgan, MACCS).

- Feature Preprocessing: Handle missing values (impute or remove). Apply Variance Thresholding to remove low-variance features.

- Feature Selection: Use methods like Recursive Feature Elimination (RFE) or Boruta with a Random Forest to select the most informative 100-300 descriptors to reduce dimensionality and avoid overfitting.

- Feature Scaling: Standardize features (e.g., StandardScaler) for SVM and Neural Networks. Tree-based methods (RF, GB) typically do not require scaling.

III. Model Training & Hyperparameter Optimization

- Algorithm Selection: Choose one or more of the four core algorithms.

- Define Search Space: Establish hyperparameter grids for optimization (see Protocols 3.2-3.5).

- Optimize: Use Bayesian Optimization or Grid Search with 5-Fold Cross-Validation on the Training Set. Use the Validation Set for early stopping and final model selection.

- Train Final Model: Retrain the model with the optimal hyperparameters on the combined Training + Validation set.

IV. Model Evaluation & Interpretation

- Predict & Evaluate: Apply the final model to the unseen Hold-out Test Set. Calculate key metrics: R², RMSE, MAE, and, if classification (e.g., high/low bioavailability), ROC-AUC, accuracy, precision, recall.

- Validate: Perform Y-randomization (scrambling target values) to confirm the model is not learning chance correlations.

- Interpret:

- Tree-based models: Analyze feature importance scores (Gini/permutation importance).

- Global: Apply SHAP (SHapley Additive exPlanations) or Partial Dependence Plots (PDP) to understand feature contributions across the dataset.

- Local: Use SHAP or LIME to explain individual predictions.
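
The Y-randomization check in step IV can be illustrated with a deliberately simple model. The sketch below uses a one-descriptor ordinary least squares fit as a stand-in for the real ML model, and the data are synthetic. The function names and the 100 scrambling rounds are assumptions. The point is only the comparison: R² on the true targets should clearly exceed R² on the scrambled targets.

```python
import random

def ols_r2(x, y):
    """R² of a one-descriptor least-squares fit (stand-in for the real model)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return 1.0 - ss_res / ss_tot

def y_randomization(x, y, n_rounds=100, seed=0):
    """Refit the model on shuffled targets and collect the resulting R² values."""
    rng = random.Random(seed)
    true_r2 = ols_r2(x, y)
    scrambled = []
    for _ in range(n_rounds):
        y_perm = list(y)
        rng.shuffle(y_perm)
        scrambled.append(ols_r2(x, y_perm))
    return true_r2, scrambled

# Synthetic data: one descriptor with a genuine linear relationship plus noise
rng = random.Random(42)
descriptor = [float(i) for i in range(20)]
log_cl = [0.5 * x + rng.gauss(0.0, 0.3) for x in descriptor]

true_r2, scrambled_r2 = y_randomization(descriptor, log_cl, n_rounds=100, seed=1)
print(f"true R² = {true_r2:.3f}, max scrambled R² = {max(scrambled_r2):.3f}")
```

In a real workflow the whole pipeline, including feature selection, would be rerun on each scrambled target vector, because selecting features against the true targets first would bias the comparison.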

Protocol 3.2: Random Forest for Human Clearance Prediction

Specific Application: Predicting human hepatic clearance (log CL) using 2D molecular descriptors.

Detailed Methodology:

- Follow Protocol 3.1 for data curation. Aim for a dataset of >500 compounds with measured human in vivo clearance.

- Featurization: Compute an initial set of ~1000 2D descriptors (e.g., from RDKit). Apply correlation filtering (remove features with |r| > 0.95) and use Random Forest-based importance for final selection (~150 features).

- Hyperparameter Optimization (using scikit-learn `RandomForestRegressor`):

- Perform a Bayesian search (e.g., via scikit-optimize or Optuna, since scikit-learn itself ships only grid and randomized search) over: `n_estimators`: [100, 500, 1000], `max_depth`: [10, 30, None], `min_samples_split`: [2, 5, 10], `min_samples_leaf`: [1, 2, 4], `max_features`: ['sqrt', 'log2'].

- Use 5-fold CV on the training set, optimizing for `neg_mean_squared_error`.

- Training: Train the optimized RF model. Extract and visualize feature importance.

Protocol 3.3: Support Vector Regression (SVR) for Plasma Protein Binding (PPB)

Specific Application: Predicting fraction unbound (log fu) using topological descriptors.

Detailed Methodology:

- Curate a dataset of >800 compounds with experimentally measured human PPB (% bound or fu).

- Featurization: Use a curated set of ~200 topological (2D) descriptors. Crucially, scale all features to zero mean and unit variance using a `StandardScaler` fitted on the training data only.

- Hyperparameter Optimization (using scikit-learn `SVR` with RBF kernel):

- Perform a grid search over: `C`: [0.1, 1, 10, 100], `gamma`: ['scale', 'auto', 0.01, 0.1].

- Use 5-fold CV on the scaled training set, optimizing for R².

- Training & Evaluation: Train the optimized SVR model. Due to SVR's lack of inherent feature importance, use permutation importance on the test set for interpretation.
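
The requirement that scaling statistics come from the training data only can be made concrete with a small sketch. This is a plain-Python illustration of the idea rather than the scikit-learn implementation. The function names and toy descriptor rows are hypothetical, and the population standard deviation is used to mirror the zero-mean, unit-variance scaling described above.

```python
from statistics import mean, pstdev

def fit_scaler(train_rows):
    """Compute per-descriptor mean and population SD from training rows only."""
    columns = list(zip(*train_rows))
    means = [mean(col) for col in columns]
    sds = [pstdev(col) or 1.0 for col in columns]  # guard against constant columns
    return means, sds

def transform(rows, means, sds):
    """Apply previously fitted statistics to any split (train, validation, or test)."""
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]

# Toy descriptor matrices: each row is a compound, each column a descriptor
train_rows = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
test_rows = [[4.0, 20.0]]

means, sds = fit_scaler(train_rows)          # statistics come from training data
scaled_train = transform(train_rows, means, sds)
scaled_test = transform(test_rows, means, sds)  # test set reuses them unchanged
print(scaled_test)
```

Fitting the scaler on the full dataset instead would let test-set statistics influence training, which tends to make hold-out performance look better than it is.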

Protocol 3.4: Neural Network for Volume of Distribution at Steady State (Vss)

Specific Application: Predicting log Vss using extended-connectivity fingerprints (ECFPs).

Detailed Methodology:

- Assemble a dataset of >1000 compounds with measured rat or human Vss.

- Featurization: Use ECFP4 fingerprints (radius=2, 1024 bits) as input features. No scaling required for fingerprint bits.

- Network Architecture & Optimization (using TensorFlow/Keras):

- Design a Multilayer Perceptron (MLP) with 2-4 hidden layers (e.g., 512, 256, 128 neurons) with ReLU activation. Include Dropout layers (rate=0.2-0.5) after each hidden layer for regularization.

- Use the Adam optimizer with a learning rate of 0.001.

- Implement Early Stopping (patience=20) monitoring validation loss.

- Training: Train for up to 200 epochs with a batch size of 32. Use the validation set for early stopping. Apply the final model to the test set.
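
The early stopping rule used in this protocol can be sketched independently of any deep learning framework. The function below is a plain-Python illustration of patience-based stopping on a sequence of validation losses. It is not the Keras callback itself; the function name and the loss values are made up.

```python
def early_stopping_epoch(val_losses, patience=20, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once `patience` consecutive epochs fail to improve the
    best validation loss by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses) - 1  # ran out of epochs without triggering

# Illustrative validation losses; a short patience keeps the example readable
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.74]
best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=3)
print(best_epoch, stop_epoch)
```

The weights from `best_epoch`, not from the final epoch, are the ones that should be carried forward to the test set.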

Protocol 3.5: Gradient Boosting (XGBoost) for Oral Bioavailability (%F) Classification

Specific Application: Classifying compounds as having high (≥30%) or low (<30%) oral bioavailability.

Detailed Methodology:

- Curate a balanced dataset of >1200 compounds with clear binary bioavailability labels.

- Featurization: Use a mix of 200 physicochemical descriptors (logP, TPSA, HBD, HBA) and molecular fingerprints.

- Hyperparameter Optimization (using `XGBClassifier`):

- Perform a Bayesian search over: `n_estimators`: [100, 500], `max_depth`: [3, 6, 9], `learning_rate`: [0.01, 0.05, 0.1], `subsample`: [0.7, 0.9], `colsample_bytree`: [0.7, 0.9].

- Use 5-fold stratified CV on the training set, optimizing for ROC-AUC.

- Training & Evaluation: Train the optimized model. Analyze results using the ROC curve, precision-recall curve, and SHAP summary plots for interpretation.
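
The labeling and the ROC-AUC objective used in this protocol can be sketched without the model itself. In the illustration below, the %F values and classifier scores are made up, the function names are hypothetical, and assigning a compound at exactly 30% to the high class is an assumption. ROC-AUC is computed through its pairwise-ranking definition.

```python
def label_bioavailability(percent_f, threshold=30.0):
    """Binary label: 1 = high bioavailability, 0 = low (boundary handling is assumed)."""
    return 1 if percent_f >= threshold else 0

def roc_auc(labels, scores):
    """Probability that a random positive is scored above a random negative."""
    positives = [s for l, s in zip(labels, scores) if l == 1]
    negatives = [s for l, s in zip(labels, scores) if l == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(positives) * len(negatives))

# Illustrative experimental %F values and hypothetical predicted probabilities
percent_f = [85.2, 45.7, 22.1, 10.0, 30.0]
scores = [0.9, 0.4, 0.5, 0.1, 0.7]

labels = [label_bioavailability(f) for f in percent_f]
auc = roc_auc(labels, scores)
print(labels, round(auc, 3))
```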

Visualizations

Diagram 1: ML-PK Model Development Workflow

Diagram 2: Neural Network Architecture for Vss Prediction

Diagram 3: Algorithm Selection Logic for PK Endpoints

Within the broader thesis on Quantitative Structure-Activity/Property Relationship (QSAR/QSPR) models for pharmacokinetic (PK) research, the accurate in silico prediction of specific PK endpoints is critical for accelerating drug discovery. This application note details protocols and modeling approaches for five key physicochemical and ADME properties: Lipophilicity (LogP), Aqueous Solubility (LogS), Permeability (including P-glycoprotein substrate identification), Cytochrome P450 Enzyme Inhibition, and Plasma Protein Binding.

Key Property Definitions & Data Ranges

Table 1: Summary of Key Pharmacokinetic Endpoints and Typical Data Ranges

| PK Endpoint | Common Symbol/Measure | Typical Range (Drug-like Molecules) | Primary Experimental Assay | QSAR Relevance |

|---|---|---|---|---|

| Lipophilicity | LogP (octanol-water) | -2 to 7 | Shake-flask, HPLC | High; foundational for other models |

| Aqueous Solubility | LogS (mol/L) | -12 to 2 | Kinetic/thermodynamic turbidimetry | High; depends on solid-state properties |

| Permeability (P-gp Substrate) | Efflux Ratio (ER) | ER > 2 = Substrate | Caco-2, MDCK-MDR1 | Moderate; complex protein-ligand interaction |

| CYP450 Inhibition | IC50 (µM) or % Inhibition at [I] | IC50: 0.1 - >100 µM | Fluorescent/LC-MS probe assay | High; crucial for DDI prediction |

| Plasma Protein Binding | % Bound (fu, fraction unbound) | 0.1% - 99.9% bound | Equilibrium dialysis, Ultrafiltration | Moderate; influenced by multiple factors |

Detailed Experimental Protocols

Protocol: High-Throughput Shake-Flask LogP Determination

Objective: To experimentally determine the octanol-water partition coefficient (LogP) for QSAR model training/validation.

Materials:

- Test compound (purified, known concentration stock in DMSO)

- n-Octanol (HPLC grade)

- Phosphate Buffered Saline (PBS, pH 7.4)

- 96-well deep-well polypropylene plates

- Plate shaker & centrifuge

- HPLC-MS system with UV/Vis detector

Procedure:

- Pre-saturation: Saturate PBS with octanol and octanol with PBS overnight. Use pre-saturated solvents for all steps.

- Sample Preparation: In a 2 mL deep-well plate, add 500 µL of octanol and 500 µL of PBS. Spike with test compound to a final concentration of 50-100 µM (DMSO ≤1% v/v).

- Equilibration: Seal plate, vortex vigorously for 10 minutes, then shake for 2 hours at 25°C.

- Phase Separation: Centrifuge at 3000 × g for 15 minutes.

- Quantification: Carefully sample 50 µL from each phase. Dilute as needed and quantify compound concentration in each phase using HPLC-UV/MS against a standard curve.

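- Calculation: LogP = log₁₀(C_octanol / C_PBS), where C_octanol and C_PBS are the equilibrium concentrations in each phase. Because the aqueous phase is buffered at pH 7.4, this value is strictly the distribution coefficient (LogD at pH 7.4) for ionizable compounds; it equals LogP only for compounds that are neutral at that pH.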
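
The calculation step reduces to a few lines once dilution factors are accounted for. The sketch below is a minimal illustration. The function name, the dilution-factor arguments, and the concentrations are hypothetical.

```python
import math

def log_partition(conc_octanol, conc_aqueous, dilution_octanol=1.0, dilution_aqueous=1.0):
    """log10 of the octanol/aqueous concentration ratio.

    Measured concentrations are multiplied by the dilution factor applied
    before analysis, so both phases are compared at their undiluted levels.
    """
    undiluted_octanol = conc_octanol * dilution_octanol
    undiluted_aqueous = conc_aqueous * dilution_aqueous
    return math.log10(undiluted_octanol / undiluted_aqueous)

# Illustrative measurement: octanol sample diluted 10-fold, PBS sample analyzed neat
log_p = log_partition(conc_octanol=9.0, conc_aqueous=0.9, dilution_octanol=10.0)
print(round(log_p, 2))
```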

Protocol: Kinetic Aqueous Solubility Assay (Nephelometry)

Objective: To determine the kinetic solubility of compounds in aqueous buffer.

Materials:

- Test compound (solid or DMSO stock)

- PBS (pH 7.4) or simulated intestinal fluid (FaSSIF)

- 96-well filter plates (e.g., 0.45 µm PVDF)

- Nephelometer or UV/Vis plate reader

- Compound library plate (10 mM in DMSO)

Procedure:

- Dispensing: Transfer 2 µL of 10 mM DMSO stock into a 96-well plate.

- Dilution: Add 198 µL of pre-warmed (25°C) buffer to each well (final [compound] = 100 µM, 1% DMSO). Seal and shake for 90 minutes.

- Filtration: Transfer the suspension to a filter plate and apply vacuum filtration to separate precipitated solid.

- Measurement:

- Nephelometry: Measure turbidity (light scattering) of the pre-filtered suspension directly. Compare to a standard curve of known suspensions.

- UV Quantification: Quantify the concentration of the filtrate using a UV standard curve (CLND or LC-MS for confirmation).

- Reporting: Report as kinetic solubility in µM or µg/mL. A turbidity value above baseline indicates precipitation.

Protocol: Caco-2/MDCK-MDR1 Permeability & P-gp Efflux Assay

Objective: To assess passive permeability and identify P-glycoprotein (P-gp) substrates.

Materials:

- Caco-2 or MDCKII-MDR1 cells (passage 25-40)

- Transwell inserts (12-well, 1.12 cm², 0.4 µm pore)

- Transport buffer (HBSS-HEPES, pH 7.4)

- Reference compounds: High Permeability (Metoprolol), Low Permeability (Furosemide), P-gp substrate (Digoxin)

- P-gp inhibitor (e.g., GF120918 or Verapamil)

- LC-MS/MS for quantification

Procedure:

- Cell Culture: Seed cells on Transwell inserts at high density. Culture for 21 days (Caco-2) or 5-7 days (MDCK-MDR1) until TEER > 300 Ω·cm².

- Bidirectional Transport:

- A-to-B (Apical to Basolateral): Add test compound (10 µM) to the apical chamber. Sample from the basolateral chamber over 120 minutes.

- B-to-A (Basolateral to Apical): Add test compound to the basolateral chamber. Sample from the apical chamber over 120 minutes.

- Inhibited Control: Repeat A-to-B and B-to-A transport in the presence of 10 µM P-gp inhibitor in both chambers.

- LC-MS/MS Analysis: Quantify compound concentrations in all samples.

- Calculations:

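- Apparent Permeability, Papp (cm/s) = (dQ/dt) / (A × C₀), where dQ/dt is the rate of compound appearance in the receiver chamber, A is the membrane surface area (cm²), and C₀ is the initial donor concentration.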

- Efflux Ratio (ER) = Papp(B-to-A) / Papp(A-to-B)

- Interpretation: ER ≥ 2 suggests active efflux. Inhibition of ER by >50% with inhibitor confirms P-gp involvement.
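
The calculations and the interpretation rule above can be sketched as follows. The function names and transport rates are hypothetical. Units are chosen so that they cancel to cm/s: dQ/dt in nmol/s, area in cm², and C₀ in µM, which is numerically equal to nmol/cm³.

```python
def papp_cm_per_s(dq_dt_nmol_per_s, area_cm2, c0_um):
    """Apparent permeability; C0 in µM equals nmol/cm^3, so the result is in cm/s."""
    return dq_dt_nmol_per_s / (area_cm2 * c0_um)

def efflux_ratio(papp_b_to_a, papp_a_to_b):
    """Ratio of basolateral-to-apical over apical-to-basolateral permeability."""
    return papp_b_to_a / papp_a_to_b

def is_pgp_substrate(er, er_inhibited, er_cutoff=2.0, min_reduction=0.5):
    """Apply the rule: ER >= 2 and more than 50% reduction of ER with inhibitor."""
    reduction = (er - er_inhibited) / er
    return er >= er_cutoff and reduction > min_reduction

# Illustrative rates for a 12-well Transwell insert (1.12 cm²) dosed at 10 µM
area, c0 = 1.12, 10.0
papp_ab = papp_cm_per_s(1.12e-5, area, c0)
papp_ba = papp_cm_per_s(5.60e-5, area, c0)

er = efflux_ratio(papp_ba, papp_ab)
substrate = is_pgp_substrate(er, er_inhibited=1.2)
print(f"Papp A-B = {papp_ab:.2e} cm/s, ER = {er:.1f}, P-gp substrate: {substrate}")
```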

Protocol: Cytochrome P450 Reversible Inhibition (IC50) Assay

Objective: To determine the half-maximal inhibitory concentration (IC50) for human CYP450 isoforms (3A4, 2D6, 2C9).

Materials:

- Human liver microsomes (pooled) or recombinant CYP enzymes

- CYP-specific fluorogenic or LC-MS probe substrates (e.g., Midazolam for CYP3A4)

- Co-factor solution (NADPH regeneration system)

- 96-well black optical-bottom plates

- Fluorescent plate reader or LC-MS/MS

Procedure (Fluorescence-Based):

- Incubation Setup: In a 96-well plate, prepare serial dilutions of test inhibitor in buffer. Add microsomes (0.1 mg/mL) and probe substrate (at ~Km concentration).

- Reaction Initiation: Start the reaction by adding NADPH regenerating system. Incubate at 37°C for 30-60 minutes.

- Reaction Termination: Stop with acetonitrile containing an internal standard (for LC-MS) or stop solution (for fluorescence).

- Detection: Measure fluorescence of the metabolite or analyze via LC-MS/MS.

- Data Analysis: Plot % enzyme activity (relative to uninhibited control) vs. log[Inhibitor]. Fit data to a sigmoidal dose-response curve to calculate IC50.
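
The curve fit in the data analysis step is normally done with a nonlinear least-squares routine. As a dependency-free stand-in, the sketch below fits a two-parameter Hill model by coarse grid search. Fixing the top at 100% and the bottom at 0%, the grid ranges, the function names, and the noise-free synthetic data are all assumptions made for illustration.

```python
import math

def hill_activity(conc_um, ic50_um, hill_slope):
    """Percent activity remaining, with top fixed at 100 and bottom at 0."""
    return 100.0 / (1.0 + (conc_um / ic50_um) ** hill_slope)

def fit_ic50(concs_um, activities_pct):
    """Grid search over log10(IC50) and Hill slope minimizing squared error."""
    best = None
    for i in range(451):                 # log10(IC50) from -2.0 to 2.5 in 0.01 steps
        ic50 = 10.0 ** (-2.0 + 0.01 * i)
        for j in range(16):              # Hill slope from 0.5 to 2.0 in 0.1 steps
            slope = 0.5 + 0.1 * j
            sse = sum((a - hill_activity(c, ic50, slope)) ** 2
                      for c, a in zip(concs_um, activities_pct))
            if best is None or sse < best[0]:
                best = (sse, ic50, slope)
    return best[1], best[2]

# Synthetic dose-response data generated from a known IC50 of about 3.16 µM
concs = [0.1, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0]
true_ic50 = 10.0 ** 0.5
activities = [hill_activity(c, true_ic50, 1.0) for c in concs]

ic50_fit, slope_fit = fit_ic50(concs, activities)
print(f"IC50 = {ic50_fit:.2f} µM, Hill slope = {slope_fit:.1f}")
```

For modeling, IC50 values are usually converted to pIC50 (the negative log10 of the molar IC50) so that the target is roughly normally distributed.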

Protocol: Equilibrium Dialysis for Plasma Protein Binding

Objective: To determine the fraction unbound (fu) of a drug in plasma.

Materials:

- Human plasma (heparinized)

- Equilibrium dialysis device (e.g., HTD 96-well dialysis block)

- Dialysis membrane (12-14 kDa MWCO)

- PBS (pH 7.4)

- Test compound

- LC-MS/MS system

Procedure:

- Preparation: Pre-soak dialysis membranes in PBS for 10 minutes. Load one side (chamber) of the dialysis block with 150 µL of plasma spiked with test compound (e.g., 5 µM). Load the other side with 150 µL of PBS.

- Equilibration: Seal the dialysis block and incubate at 37°C with gentle agitation for 4-6 hours.

- Post-Dialysis Sampling: Carefully sample 50 µL from both the plasma and buffer chambers.

- Matrix Matching & Analysis: Add 50 µL of opposite matrix (buffer to plasma sample, plasma to buffer sample) to equalize matrix effects. Quantify drug concentrations in both sides using LC-MS/MS.

- Calculation: fu = C_buffer / C_plasma, where C_buffer and C_plasma are the post-dialysis concentrations in the buffer and plasma chambers. % Bound = (1 - fu) × 100.
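
The calculation can be sketched as below, including the log transformation that is typically applied before modeling. The function name and concentrations are hypothetical. Because both samples are matrix-matched with equal volumes, no dilution correction is applied here.

```python
import math

def fraction_unbound(conc_buffer, conc_plasma):
    """Return (fu, percent bound, log10 fu) from post-dialysis concentrations."""
    fu = conc_buffer / conc_plasma
    percent_bound = (1.0 - fu) * 100.0
    return fu, percent_bound, math.log10(fu)

# Illustrative post-dialysis concentrations in µM
fu, percent_bound, log_fu = fraction_unbound(conc_buffer=0.05, conc_plasma=5.0)
print(f"fu = {fu:.3f}, bound = {percent_bound:.1f}%, log fu = {log_fu:.1f}")
```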

Visualizations

Title: Interdependence of Key PK Properties in ADME Profiling

Title: Tiered Experimental Screening Workflow for Key PK Endpoints

Title: P-gp Mediated Efflux in a Bidirectional Permeability Assay

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Materials for PK Endpoint Assays

| Category/Item | Specific Example/Supplier (Illustrative) | Primary Function in PK Assays |

|---|---|---|

| Lipophilicity | n-Octanol (HPLC grade), Pre-saturated PBS | Provides the two-phase system for equilibrium partitioning measurement (LogP). |

| Solubility | 96-well Filter Plates (0.45 µm PVDF), Nephelometer | Enables high-throughput separation of precipitate and quantification of kinetic solubility. |

| Permeability | Caco-2 cells (ATCC HTB-37), MDCKII-MDR1 cells, Transwell inserts | Provide validated in vitro models of intestinal absorption and active efflux transport. |

| CYP Inhibition | Human Liver Microsomes (Pooled, 50-donor), NADPH Regeneration System, Isoform-specific Probe Substrates (e.g., Phenacetin for CYP1A2) | Source of metabolic enzymes and co-factors for measuring isoform-specific inhibition potency (IC50). |

| Protein Binding | HTD Equilibrium Dialysis Blocks (96-well), Dialysis Membranes (12-14 kDa MWCO), Blank Human Plasma | Gold-standard system for measuring the free fraction of drug in plasma at equilibrium. |

| Quantification | LC-MS/MS System (e.g., Sciex Triple Quad), Analytical Columns (C18) | Enables sensitive and specific quantification of drugs and metabolites in complex biological matrices. |

| Automation | Liquid Handling Robot (e.g., Tecan Freedom EVO) | Ensures precision and throughput for compound and reagent dispensing in 96/384-well formats. |

Integrating QSAR/QSPR Predictions into the Virtual Screening and Lead Optimization Pipeline

Application Notes

The integration of Quantitative Structure-Activity/Property Relationship (QSAR/QSPR) models into virtual screening (VS) and lead optimization pipelines represents a cornerstone of modern computer-aided drug design (CADD). Framed within a broader thesis on QSAR/QSPR for pharmacokinetic (PK) properties, this integration strategically de-risks the discovery process by prioritizing compounds with a balanced profile of potency and desirable ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) characteristics early in the pipeline.

Core Applications:

- Pre-filtering in Virtual Screening: Post-docking or alongside pharmacophore models, QSAR models for key PK properties (e.g., aqueous solubility, Caco-2 permeability, human liver microsomal stability) are used to filter massive virtual libraries. This prioritizes hits not only for target binding but also for drug-like character.

- Lead Series Prioritization: When multiple chemical series emerge from hit identification, consensus predictions from QSPR models for properties like plasma protein binding, volume of distribution, and clearance provide a quantitative basis for selecting the most promising series for synthesis.

- Guiding Synthetic Chemistry in Lead Optimization: As medicinal chemists design new analogs, real-time predictions for target activity (QSAR) and ADMET properties (QSPR) inform structural modifications. This allows potency and PK to be optimized simultaneously, reducing both the number of synthesis cycles and costly late-stage attrition.

Data Integration Workflow: A successful integration hinges on an automated workflow where molecular structures from virtual libraries or proposed analogs are encoded into descriptors, fed into validated QSAR/QSPR models, and the predictions are aggregated into a multi-parameter optimization (MPO) score or displayed in a dashboard for easy decision-making.

Key Experimental Protocols

Protocol 1: Integrated Structure-Based Virtual Screening with ADMET Pre-Filtering

Objective: To identify hits for a novel kinase target that combine predicted binding affinity with a high probability of favorable oral PK.

Materials & Software: KNIME Analytics Platform or Pipeline Pilot; Molecular docking software (e.g., AutoDock Vina, Glide); QSAR/QSPR model suite (e.g., SwissADME, admetSAR, or proprietary models); Compound library (e.g., ZINC, Enamine REAL).

Procedure:

- Library Preparation: Download or curate a virtual compound library (≈1-5 million compounds). Prepare 3D structures using a standardizer (e.g., RDKit). Apply basic property filters (150 < MW < 500, LogP < 5).

- Parallel Pre-Filtering: Execute in silico predictions in parallel:

- Step A (Docking): Dock prepped library into the target's crystal structure binding site. Retain top 100,000 compounds based on docking score.

- Step B (ADMET Prediction): For the entire prepped library, compute key ADMET properties using QSPR models: Human Intestinal Absorption (HIA), Caco-2 permeability, Solubility (LogS), and CYP3A4 inhibition.

- Intersection & Scoring: Intersect the top-ranked compounds from Step A and Step B (top 20% of each). For the intersected set, calculate an MPO score:

MPO Score = (F_Dock + F_HIA + F_Papp + F_Solubility) / 4

Where F represents a normalized score (0-1) for each parameter, with 1 being ideal.

- Visual Inspection & Selection: Visually inspect the top 500 compounds by MPO score for binding mode novelty and synthetic accessibility. Select 50-100 for in vitro testing.
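
The intersection and scoring step can be illustrated with a short Python sketch. It makes several assumptions that are not in the protocol: every property has already been normalized to a 0-1 score where higher is better, the Step B ranking uses the unweighted mean of the three ADMET scores as a composite, and the compound IDs and values are invented for demonstration.

```python
from statistics import mean


def top_fraction(scores, fraction=0.20):
    """Return the IDs in the top `fraction` of compounds (higher score = better)."""
    n_keep = max(1, int(len(scores) * fraction))
    ranked = sorted(scores, key=scores.get, reverse=True)
    return set(ranked[:n_keep])


def mpo_score(f_dock, f_hia, f_papp, f_solubility):
    """Unweighted mean of the four normalized (0-1) scores."""
    parts = (f_dock, f_hia, f_papp, f_solubility)
    if any(not 0.0 <= p <= 1.0 for p in parts):
        raise ValueError("each normalized score must lie in [0, 1]")
    return mean(parts)


# Toy library (made-up values): ID -> (F_Dock, F_HIA, F_Papp, F_Solubility)
library = {
    "c01": (0.90, 0.80, 0.70, 0.90),
    "c02": (0.95, 0.20, 0.30, 0.10),
    "c03": (0.40, 0.90, 0.90, 0.90),
    "c04": (0.30, 0.50, 0.40, 0.60),
    "c05": (0.10, 0.30, 0.20, 0.40),
    "c06": (0.60, 0.60, 0.50, 0.40),
    "c07": (0.55, 0.10, 0.20, 0.30),
    "c08": (0.20, 0.70, 0.60, 0.50),
    "c09": (0.70, 0.40, 0.30, 0.50),
    "c10": (0.35, 0.45, 0.55, 0.35),
}

# Step A ranking: docking score only. Step B ranking: assumed ADMET composite.
dock_rank = {cid: f[0] for cid, f in library.items()}
admet_rank = {cid: mean(f[1:]) for cid, f in library.items()}

# Keep compounds that are in the top 20% of both rankings, then score them.
shortlist = top_fraction(dock_rank) & top_fraction(admet_rank)
ranked = sorted(((mpo_score(*library[cid]), cid) for cid in shortlist), reverse=True)
for score, cid in ranked:
    print(f"{cid}: MPO = {score:.3f}")
```

An unweighted mean treats all four parameters as equally important; a project that cares more about one property could swap in a weighted mean without changing the rest of the flow.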

Protocol 2: In-Silico Lead Optimization Cycle for PK Properties

Objective: To improve the metabolic stability (human liver microsomal half-life, HLMs t1/2) of a lead compound (IC50 = 50 nM) while maintaining potency.

Materials & Software: MedChem design software (e.g., Chemicalize, Forge); QSAR model for target activity; QSPR model for microsomal stability; Electronic lab notebook (ELN).

Procedure:

- Establish Baselines: For the lead compound (L0), record experimental IC50 (50 nM) and HLMs t1/2 (10 min). Obtain corresponding in silico predictions from your models.

- Design Analogues: Generate a focused virtual library of 100 analogues based on L0, exploring modifications around metabolically labile sites (e.g., soft spots identified from metabolite prediction).

- Predictive Profiling: For each analogue, run predictions:

- QSAR Model: Predict pIC50.

- QSPR Model: Predict HLMs t1/2 (categorical: Low < 15 min, Medium 15-30 min, High > 30 min).

- Triaging & Synthesis: Apply a dual-parameter filter: (Predicted pIC50 > 6.3 [IC50 < ~500 nM]) AND (Predicted Stability = "High"). Rank filtered compounds by synthetic complexity. Propose the top 3-5 for synthesis.

- Iterate: Test synthesized compounds experimentally. Feed new data (L1, L2...) back into the models for refinement and initiate the next design cycle.
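
The triaging logic in this cycle can be sketched as follows. The sketch is illustrative only: the `Analogue` record, the `synth_complexity` score (lower means easier to make), and the example predictions are hypothetical, and the potency cut-off is passed in as a parameter so it can be changed per project. The conversion uses pIC50 = 9 − log10(IC50 in nM), which gives about 7.30 for the 50 nM lead.

```python
import math
from dataclasses import dataclass


def pic50_from_ic50_nm(ic50_nm: float) -> float:
    """Convert an IC50 in nM to pIC50 (= -log10 of IC50 in mol/L)."""
    return 9.0 - math.log10(ic50_nm)


def stability_class(t_half_min: float) -> str:
    """Bin a predicted HLM half-life using the protocol's cut-offs."""
    if t_half_min < 15:
        return "Low"
    if t_half_min <= 30:
        return "Medium"
    return "High"


@dataclass
class Analogue:
    name: str
    pred_pic50: float
    pred_t_half_min: float
    synth_complexity: float  # hypothetical score, lower = easier to make


def triage(analogues, min_pic50=6.3, max_picks=5):
    """Apply the dual-parameter filter, then rank by synthetic complexity."""
    keep = [
        a for a in analogues
        if a.pred_pic50 > min_pic50
        and stability_class(a.pred_t_half_min) == "High"
    ]
    return sorted(keep, key=lambda a: a.synth_complexity)[:max_picks]


# Made-up predictions for four analogues of the lead.
candidates = [
    Analogue("A1", 7.1, 45.0, 3.2),
    Analogue("A2", 6.0, 60.0, 1.0),  # fails the potency filter
    Analogue("A3", 7.4, 12.0, 2.0),  # fails the stability filter
    Analogue("A4", 6.8, 35.0, 2.1),
]
picks = triage(candidates)
print([a.name for a in picks])
print(f"Lead pIC50 at 50 nM: {pic50_from_ic50_nm(50.0):.2f}")
```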

Summarized Quantitative Data

Table 1: Performance Metrics of Representative Open-Source QSPR Models for Key PK Properties

| Property | Model (Source) | Algorithm | Training Set (n) | Test Set Performance (R²/Accuracy) | Key Descriptors |

|---|---|---|---|---|---|

| Aqueous Solubility (LogS) | ESOL (Delaney, 2004) | Linear Regression | 2,873 | R² = 0.72 | MLogP, Molecular Weight, Aromatic Atoms |

| Caco-2 Permeability | admetSAR 2.0 | Random Forest | 1,302 | Accuracy = 0.92 | Topological polar surface area (TPSA), Papp, nHAcceptors |

| Human Liver Microsomal Stability | SwissADME | Bayesian | 6,500 (categorical) | Accuracy = 0.77 | LogP, TPSA, #Rotatable Bonds, #Aromatic heavy atoms |

| hERG Inhibition Risk | Pred-hERG 4.2 | Support Vector Machine | 5,984 | BACC* = 0.84 | pKa, LogD, #Basic nitrogens, FASA+ |

*BACC: Balanced Accuracy

Table 2: Impact of QSPR Pre-Filtering on Virtual Screening Enrichment (Hypothetical Case Study)

| Screening Scenario | Compounds Screened | Hit Rate (IC50 < 10 µM) | % of Hits with Desired Solubility (LogS > -5) | Attrition Saved in Later PK Screening |

|---|---|---|---|---|

| Docking Only | 100,000 | 1.2% | 35% | Baseline |

| Docking + QSPR Pre-filter | 20,000 | 1.5% | 82% | ~60% reduction in compounds requiring solubility assays |

Visualizations

Workflow for Integrating QSPR into Virtual Screening

QSAR/QSPR-Guided Lead Optimization Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

| Tool/Resource | Type | Primary Function in QSAR/QSPR Integration |

|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Generates molecular descriptors, fingerprints, and handles standard molecule I/O for feeding into models. |

| KNIME / Pipeline Pilot | Visual Workflow Automation Platform | Orchestrates the entire integrated pipeline, connecting docking, descriptor calculation, model execution, and data fusion steps. |

| SwissADME / admetSAR | Web-Based ADMET Prediction Suite | Provides readily implemented, robust QSPR models for key properties used in pre-filtering and prioritization. |

| Forge / MOE | Commercial Molecular Modeling Suite | Offers advanced QSAR model building tools and integrated descriptor fields for real-time prediction during compound design. |

| StarDrop | Multi-Parameter Optimization Software | Enables the creation of predictive panels and compound scoring functions that balance potency, PK, and toxicity predictions. |

| Electronic Lab Notebook (ELN) | Data Management System | Captures both predicted and experimental data, closing the feedback loop essential for model refinement and validation. |

Within the broader thesis on Quantitative Structure-Activity/Property Relationship (QSAR/QSPR) models for pharmacokinetic (PK) properties, this case study exemplifies the critical transition from in vitro or in silico descriptors to predicting in vivo human outcomes. Human hepatic clearance (CLH) and oral bioavailability (F) are pivotal parameters governing dosing regimens and efficacy. This application note details the protocols and models that integrate physicochemical properties, in vitro assay data, and advanced computational techniques to predict these complex, system-dependent PK parameters, thereby accelerating candidate selection and reducing late-stage attrition.

Predictive Models and Key Quantitative Data

Prediction strategies range from direct QSPR to mechanistic, physiology-based models. The following tables summarize established and emerging approaches.

Table 1: Summary of Prediction Methods for Human Hepatic Clearance (CLH)

| Method | Core Principle | Key Input Data | Typical Application & Notes |

|---|---|---|---|

| Direct QSPR | Statistical correlation between molecular descriptors and in vivo CLH. | 2D/3D molecular descriptors (e.g., logP, PSA, HBD). | Early screening. Limited by dataset congenericity. |

| In Vitro-In Vivo Extrapolation (IVIVE) | Scaling of intrinsic clearance (CLint) from hepatocytes or microsomes using liver size and blood flow. | In vitro CLint, human hepatocyte count (1.2 × 10⁸ cells/g liver), liver weight (25 g/kg bw). | Industry standard. Incorporates the "well-stirred" liver model. |

| Physiologically-Based Pharmacokinetic (PBPK) | Multi-compartment model simulating drug disposition through mechanistic pathways. | Physicochemical properties, in vitro ADME data, human physiology parameters. | Gold standard for complex scenarios (e.g., DDIs, special populations). |

Table 2: Summary of Prediction Methods for Human Oral Bioavailability (F)

F = Fa × Fg × Fh (Fraction absorbed × gut wall bioavailability × hepatic bioavailability)

| Component | Primary Prediction Method | Key Assays/Models | Commonly Used Tools/Software |

|---|---|---|---|

| Fa (Absorption) | QSPR models, Caco-2 permeability, PAMPA. | High-throughput permeability assays. | GastroPlus, Simcyp ADAM model. |

| Fg (Gut Metabolism) | IVIVE from intestinal microsomes or enterocytes. | CYP3A4/UGT reaction phenotyping in intestinal tissue. | Incorporation into PBPK models. |

| Fh (Hepatic Availability) | Derived from predicted CLH. | Fh = 1 - (CLH / QH), where QH is hepatic blood flow (~90 L/h). | Integrated outcome of CLH IVIVE. |
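
As a worked illustration of how the three components combine, the sketch below computes Fh from a hepatic clearance value and multiplies it by Fa and Fg. The function names and input values are hypothetical; the only quantities taken from the table are the relationships F = Fa × Fg × Fh and Fh = 1 − CLH / QH with QH of about 90 L/h.

```python
def hepatic_availability(cl_h: float, q_h: float = 90.0) -> float:
    """Fh = 1 - CLH / QH, with CLH and QH in the same units (L/h here)."""
    if not 0.0 <= cl_h <= q_h:
        raise ValueError("CLH must lie between 0 and QH")
    return 1.0 - cl_h / q_h


def oral_bioavailability(fa: float, fg: float, cl_h: float, q_h: float = 90.0) -> float:
    """F = Fa x Fg x Fh."""
    for name, value in (("Fa", fa), ("Fg", fg)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1]")
    return fa * fg * hepatic_availability(cl_h, q_h)


# Made-up inputs: 90% absorbed, 80% escaping gut metabolism, CLH = 30 L/h.
f_h = hepatic_availability(30.0)
f_oral = oral_bioavailability(fa=0.9, fg=0.8, cl_h=30.0)
print(f"Fh = {f_h:.2f}, F = {f_oral:.2f}")
```

Because F is a product, a compound with high absorption can still have low bioavailability if hepatic extraction is high, which is why CLH prediction feeds directly into the F estimate.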

Table 3: Representative Performance Metrics of Published Models (Recent Examples)

| Predicted Endpoint | Model Type | Dataset Size | Key Descriptors/Inputs | Reported Performance (R²/Accuracy) |

|---|---|---|---|---|

| Human CLH | Machine Learning (Random Forest) | ~600 compounds | Molecular fingerprints, in vitro clearance, plasma binding. | Test set R² ≈ 0.65 |

| Human Oral F | Hybrid QSPR-PBPK | ~300 drugs | Calculated Fa, predicted CLH, in silico Fg. | Classified high/low F with >80% accuracy |

Experimental Protocols

Protocol 1: IVIVE for Human Hepatic Clearance from Cryopreserved Human Hepatocytes

Objective: To predict human in vivo hepatic clearance (CLH) from in vitro intrinsic clearance (CLint, in vitro) data.

Materials: See Scientist's Toolkit.

Procedure:

- Incubation Setup: Prepare a 1 µM test compound solution in hepatocyte incubation medium (≥1 million cells/mL). Include positive controls (e.g., 7-ethoxycoumarin) and vehicle controls.

- Time Course: Aliquot the incubation mixture into pre-warmed tubes. Incubate at 37°C with gentle shaking. Terminate reactions at predefined time points (e.g., 0, 15, 30, 60, 90, 120 min) by adding an equal volume of ice-cold acetonitrile containing internal standard.

- Sample Analysis: Centrifuge to pellet protein. Analyze supernatant using LC-MS/MS to determine parent compound depletion over time.

- Data Analysis:

- Plot ln(% remaining) vs. time. The depletion rate constant k (min⁻¹) is the negative of the slope.

- Calculate in vitro CLint (µL/min/million cells): CLint, in vitro = k / (cell density in million cells per µL). At 1 million cells/mL this is k / 0.001.

- Scaling to Whole Liver:

- Scale to in vivo CLint (mL/min/kg): CLint, vivo = CLint, in vitro × Hepatocellularity (120 × 10⁶ cells/g liver) × Liver weight (25.7 g/kg body weight) ÷ 1000 (µL to mL).

- Apply Well-Stirred Model: CLH = (QH × fu × CLint, vivo) / (QH + fu × CLint, vivo), where QH = 90 L/h (human hepatic blood flow; about 21.4 mL/min/kg for a 70 kg adult), fu = fraction unbound in blood, and QH and CLint, vivo are expressed in the same units.
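
The data analysis and scaling steps can be chained together as in the sketch below. It is a minimal illustration under stated assumptions: the depletion data are synthetic (generated with k = 0.01 min⁻¹), the fu value is made up, the function names are hypothetical, and QH is converted from 90 L/h to mL/min/kg assuming a 70 kg adult so that it matches the units of the scaled CLint.

```python
import math


def depletion_rate_constant(times_min, pct_remaining):
    """Least-squares slope of ln(% remaining) vs time; returns k = -slope (1/min)."""
    ys = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    t_mean = sum(times_min) / n
    y_mean = sum(ys) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_min, ys))
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    return -sxy / sxx


def clint_in_vitro(k_per_min, cells_per_ml=1.0e6):
    """CLint in uL/min/million cells = k / (million cells per uL)."""
    million_cells_per_ul = cells_per_ml / 1.0e6 / 1000.0
    return k_per_min / million_cells_per_ul


def clint_in_vivo(clint_vitro, hepatocellularity=120.0, liver_g_per_kg=25.7):
    """Scale to mL/min/kg; hepatocellularity is in million cells per g liver."""
    return clint_vitro * hepatocellularity * liver_g_per_kg / 1000.0  # uL -> mL


def well_stirred_clh(clint_vivo, fu, q_h):
    """Well-stirred model; clint_vivo and q_h must share units."""
    return q_h * fu * clint_vivo / (q_h + fu * clint_vivo)


# Synthetic depletion curve at the protocol's time points.
times = [0, 15, 30, 60, 90, 120]
remaining = [100.0 * math.exp(-0.01 * t) for t in times]

k = depletion_rate_constant(times, remaining)
cl_vitro = clint_in_vitro(k)       # uL/min/million cells
cl_vivo = clint_in_vivo(cl_vitro)  # mL/min/kg

# 90 L/h -> mL/min/kg, assuming a 70 kg adult.
q_h = 90.0 * 1000.0 / 60.0 / 70.0
cl_h = well_stirred_clh(cl_vivo, fu=0.1, q_h=q_h)

print(f"k = {k:.4f} 1/min")
print(f"CLint, in vitro = {cl_vitro:.1f} uL/min/million cells")
print(f"CLint, vivo = {cl_vivo:.2f} mL/min/kg")
print(f"CLH = {cl_h:.2f} mL/min/kg")
```

Fitting the slope over all time points, rather than taking two points, reduces the influence of a single noisy measurement on k.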

Protocol 2: Integrated In Silico Prediction of Oral Bioavailability